Our approach at Split to statistical measurement is very rigorous and top-tier in the industry. We offer two methods to calculate metrics, fixed horizon and sequential testing, and multiple experiment settings that let users tailor results to their needs while maintaining statistical rigor. But one thing we didn’t allow users to do was aggregate information across multiple feature flag versions. If a user updated a targeting rule, all the metrics restarted, which could be frustrating. Until now. With the new Custom Analysis Time Frame feature, users can slice data, rewind measurements, and aggregate the metrics of multiple allocations.

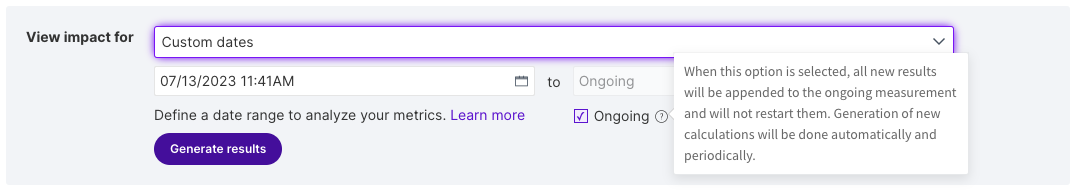

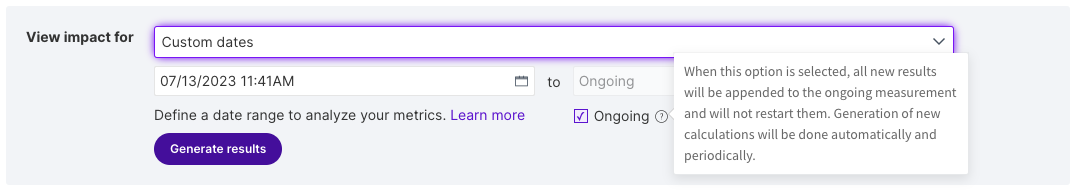

We have created a new, more flexible and open approach that allows for deeper analysis. Users can now select a start date and an end date for their metrics, so they can read just one day, a specific weekend, or any date range within the 90-day retention period. The possibilities are endless!

It’s also now possible to slice unwanted data out of an experiment: if some of the information is skewed, just move the start date forward. Another option is to set the start date and leave the end date open. And any changes made to the feature flag definition no longer restart the readings; the new information is aggregated into the ongoing analysis.
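To make the start date, open end date, and retention window concrete, here is a minimal Python sketch of how a custom analysis window might be resolved and applied. The helper names (`resolve_window`, `slice_events`), the `(day, value)` event format, and the exact retention check (start no earlier than 90 days before today) are hypothetical illustrations, not Split’s API; an open end date is modeled as `None` and treated as extending to today.

```python
from datetime import date, timedelta
from typing import List, Optional, Tuple

RETENTION_DAYS = 90  # retention period stated in the post

Window = Tuple[date, date]


def resolve_window(start: date, end: Optional[date], today: date) -> Window:
    """Hypothetical helper: validate a custom analysis window (inclusive).

    end=None models an open-ended window that keeps extending to today.
    """
    earliest = today - timedelta(days=RETENTION_DAYS)
    effective_end = today if end is None else end
    if start < earliest:
        raise ValueError(f"start {start} is outside the {RETENTION_DAYS}-day retention period")
    if effective_end > today:
        raise ValueError("end date cannot be in the future")
    if start > effective_end:
        raise ValueError("start date must not be after end date")
    return start, effective_end


def slice_events(events: List[Tuple[date, float]], window: Window) -> List[Tuple[date, float]]:
    """Keep only the (day, value) events that fall inside the window."""
    start, end = window
    return [(day, value) for day, value in events if start <= day <= end]


today = date(2024, 3, 31)  # hypothetical "current" date
events = [
    (date(2024, 3, 1), 500.0),  # skewed day, e.g. an incident
    (date(2024, 3, 2), 10.0),
    (date(2024, 3, 3), 12.0),
]
# Fast-forward the start date past the skewed day and leave the end date open.
window = resolve_window(date(2024, 3, 2), None, today)
print(slice_events(events, window))
```

Reading a single day or a specific weekend is the same call with an explicit `end` date.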

But with great power comes great responsibility. Merging different targeting rules is flexible, but it can also cause unwanted exclusions. If users combine, for instance, Version 1 (40/60), Version 2 (30/70), Version 3 (50/50), and Version 4 (70/30), then 10% of users change treatment at the first update, 20% at the second, and another 20% at the third, and those users are excluded. Why? Because each change to the allocation moves some users into a different treatment, so they see more than one experience. Our attribution logic excludes any user who switches targeting rules, even once. This is very important to keep in mind: if many changes are made to the targeting rules and those versions are combined, then, following the rules of attribution, most users may end up excluded from the measurements.
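The arithmetic above can be checked with a small Python sketch. It assumes, as a simplification rather than a description of Split’s internals, that every user falls into one of 100 sticky buckets that stay the same across versions, that the "on" treatment occupies the lowest buckets, and that attribution drops any bucket whose treatment changes at least once. The function names are hypothetical.

```python
from typing import List, Set, Tuple

NUM_BUCKETS = 100  # assumption: each bucket holds 1% of users


def treatment(bucket: int, on_percent: int) -> str:
    """Assumed layout: buckets [0, on_percent) get "on", the rest get "off"."""
    return "on" if bucket < on_percent else "off"


def exclusions(on_percents: List[int]) -> Tuple[List[int], int]:
    """Return buckets that switch at each update and the cumulative excluded count.

    Models the attribution rule as: a user who changes treatment even once is excluded.
    """
    per_update: List[int] = []
    excluded: Set[int] = set()
    for prev, curr in zip(on_percents, on_percents[1:]):
        switched = {b for b in range(NUM_BUCKETS) if treatment(b, prev) != treatment(b, curr)}
        per_update.append(len(switched))
        excluded |= switched
    return per_update, len(excluded)


# "on" share of Version 1 (40/60), Version 2 (30/70), Version 3 (50/50), Version 4 (70/30)
per_update, total = exclusions([40, 30, 50, 70])
print(per_update, total)  # [10, 20, 20] 40
```

Under these assumptions the per-update shifts match the 10%, 20%, and 20% above, while the cumulative exclusion is 40% because buckets 30 to 39 switch twice (on to off, then back). The exact figures depend on how buckets map to treatments.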

This is why aggregating versions is not suitable for a progressive rollout, where a feature is ramped up in stages. Each stage of the ramp-up can still be analyzed independently, but combining all of them will drastically reduce the sample size of the measured population.
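As a rough illustration of the ramp-up case, the Python sketch below compares analyzing each stage alone with combining all stages. It uses a hypothetical 10/90, 25/75, 50/50 ramp and the same simplifying assumptions as a bucket model: 100 sticky buckets, "on" in the lowest buckets, and any bucket whose treatment changes dropped by attribution. `stable_sample` is a hypothetical helper, not part of Split.

```python
from typing import Dict, List

NUM_BUCKETS = 100  # assumption: each bucket holds 1% of users


def stable_sample(on_percents: List[int]) -> Dict[str, int]:
    """Count buckets whose treatment never changes across the given versions.

    Assumes buckets [0, p) receive "on" in a version with p% on, and that any
    bucket whose treatment changes is dropped by attribution.
    """
    kept = {"on": 0, "off": 0}
    for bucket in range(NUM_BUCKETS):
        seen = {"on" if bucket < p else "off" for p in on_percents}
        if len(seen) == 1:  # treatment stayed the same in every version
            kept[seen.pop()] += 1
    return kept


ramp = [10, 25, 50]  # hypothetical ramp: 10/90 -> 25/75 -> 50/50
for p in ramp:
    print(f"stage {p}/{100 - p} analyzed alone:", stable_sample([p]))
print("all stages combined:", stable_sample(ramp))  # {'on': 10, 'off': 50}
```

In this model each stage analyzed alone keeps every user, while the combined view keeps only 60% of users, with just 10% in the "on" treatment even though the final stage allocated 50% to it.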

In summary, now with custom dates users can:

- Combine multiple versions and analyze results in aggregate

- Select a start date and leave the end date open

- Select a fixed time frame in the past and analyze it in depth

- Slice the data to remove unwanted information

We can’t wait to see all the new kinds of analysis this will enable. Users no longer have to worry about their metrics restarting; it will always be possible to combine and slice the data. So let’s get that data flowing!

