Testing in production is becoming more and more common across tech. The most significant benefit is knowing that your features work in production before your users have access. With feature flags, you can safely deploy your code to production targeting only internal teammates, test the functionality, validate design and performance, fix any bugs or defects, and then turn the feature flag on and allow your users to access it already knowing that it works in production.

Summary

Ensure your automation framework makes it easy to write end-to-end tests and has excellent reporting, so that the team knows exactly what happened when a production test fails. If you’re using a feature flag management tool, set it up to mirror your current environment setup. Decide with your product team which tests will run at which cadence.

Like most developers in tech, we want results fast. The following plan provides both guidance and an order of operations for what to implement if you want to start testing in production. If your team is still hesitant or fearful, have them watch my video on testing in production.

The Benefits of Testing in Production

Your users are not going to log into your staging environment to use your software, so why do companies use test environments to test their features before release? The answer is that it has simply been the status quo in software development for a long time. The norm is for developers to deploy their code to staging, for the QA team to test in staging, and then for the code to be deployed to production after testing. However, what do you do when your staging test results do not match your production results? What do you tell the QA engineer who spent so much time testing a feature in staging when it breaks in production?

Testing in production with feature flags is a safe way to verify feature functionality in the environment where your features will actually live and where user experience is paramount. At the end of the day, no one cares whether your features work in staging; they care whether they work in production, and the only way to know that is to test in production.

Comparing Testing in Production to Testing in a Staging Environment

Testing in Production

- Real-World User Traffic: Testing in production exposes your software to real-world user traffic, providing invaluable insights that are often difficult to replicate in a staging environment. It allows you to see how your application behaves under real usage patterns.

- Load Testing: Conducting load testing in production offers the advantage of seeing how your application handles traffic spikes and heavy usage in real time to ensure proper load balancing. This is crucial for applications that experience variable load, ensuring they remain responsive under different conditions.

- Performance Tests: Performance tests in production can reveal issues that may not be visible in a staging environment, such as latency problems and resource bottlenecks, due to the interaction with live databases, APIs, and other services.

- Integration Tests: While integration tests are crucial in both environments, in production, they validate the interaction between your application and external services under real operational conditions, ensuring all components work harmoniously.

Testing in a Staging Environment

- Controlled Conditions: A staging environment allows for testing in a controlled, stable setting that mirrors the production environment but without affecting real users. This is essential for thorough testing before deployment.

- Unit Tests and Integration Tests: Unit tests, which check individual components for correct behavior, usually run before code ever reaches staging, while integration tests, which ensure that all parts of the application work together correctly, are commonly conducted in a staging environment. Together they allow testers to isolate and fix issues before they impact users.

- Load Testing and Performance Tests: While these tests can also be conducted in staging, the predictability of a controlled environment might not accurately capture how the application performs under unpredictable real-world conditions. However, staging allows for identifying and mitigating potential performance issues early.

- Testers: Staging environments are predominantly the domain of professional testers who can extensively test and debug issues before any code reaches the production stage. This helps in ensuring that only well-tested and verified changes make it to the live environment.

Testing in production with feature flags makes code changes, rollbacks, and the overall development process easier for software engineers, and it even enables modern methodologies like continuous delivery.

30-60-90 Day Implementation Plan

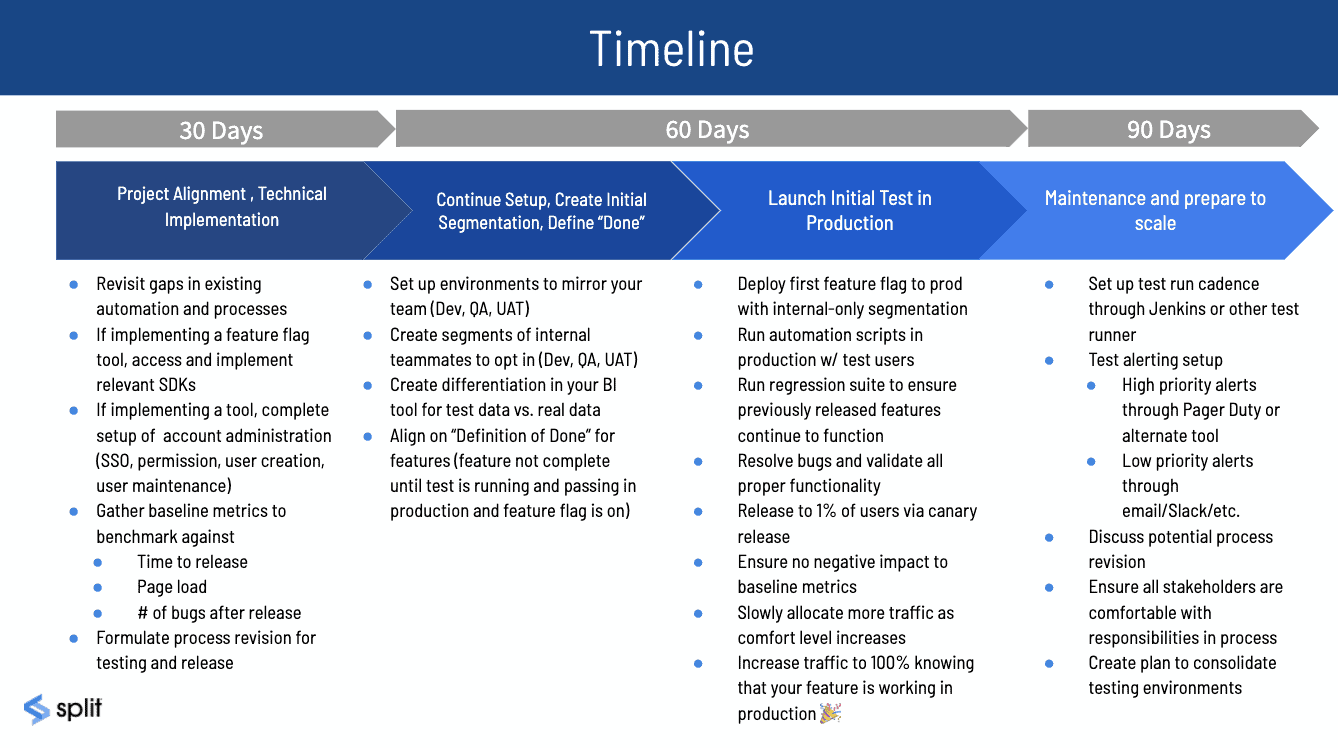

So you’re ready to implement; now what? With this sample 30-60-90 plan, you can be testing in production in just 90 days. For more complex systems or larger teams, you can expand the timeline, or for the smallest of orgs, you might be able to work through everything in the first 30 days!

The First 30 Days

The focus of the first 30 days should be project alignment and, if you’ve chosen to implement a feature flag management tool like Split, education on that tool. This is when you hammer out the details that will make testing in production work for your team. It’s also essential to revisit your team’s existing automation framework to make sure it is easy to use and extend. If your organization is struggling with its current automation framework, that will become a roadblock later, especially once you are testing in production. Ensure your automation framework makes it easy to write end-to-end tests and has excellent reporting, so that the team knows exactly what happened when a production test fails.

If you’re onboarding a tool to manage your feature flagging and experimentation, like Split, your next step is to work through its administration. This can include setting up SSO, permissions, user creation, and user maintenance. Once these are in place, you are ready to implement the appropriate SDK. (Check out Split’s SDK documentation.)

During this phase, it is also important to gather baseline metrics for benchmarking. These metrics can include time to release, page load time, the percentage of bugs found in production vs. staging, the percentage of bugs found before vs. after release, and so on. Once you have these baseline metrics, you have a standard to compare any changes against: after you release a feature, you can measure its performance and make any necessary process improvements.
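To make the baseline concrete, here is a minimal sketch of computing a few of these metrics from exported bug and release records. The `Bug` record shape, its field names, and both helper functions are hypothetical; map them onto whatever your issue tracker and deployment history actually export.

```python
from dataclasses import dataclass
from datetime import date


@dataclass
class Bug:
    # Hypothetical record shape; adapt to your issue tracker's export.
    found_in: str         # e.g. "staging" or "production"
    before_release: bool  # True if found before the feature reached users


def bug_percentages(bugs: list[Bug]) -> dict[str, float]:
    """Baseline bug percentages (0-100) to benchmark later releases against."""
    total = len(bugs)
    if total == 0:
        return {"prod_pct": 0.0, "pre_release_pct": 0.0}
    prod = sum(1 for b in bugs if b.found_in == "production")
    pre_release = sum(1 for b in bugs if b.before_release)
    return {
        "prod_pct": 100.0 * prod / total,
        "pre_release_pct": 100.0 * pre_release / total,
    }


def mean_days_to_release(pairs: list[tuple[date, date]]) -> float:
    """Average days from merge to release, given (merged, released) date pairs."""
    if not pairs:
        return 0.0
    return sum((released - merged).days for merged, released in pairs) / len(pairs)
```

Recomputing the same numbers after each release gives you a like-for-like comparison against the baseline.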

Days 30-60

During days 30-60, if you’re using a feature flag management tool, you should set it up to mirror your current environment setup. For example, if you currently have Dev, Test, QA, UAT, and Prod in your SDLC, those environments should be accurately reflected in your tool. (Eventually, you will only have Dev and Prod here, mirroring a true testing-in-production setup.) Once the environments are set up, add segments for the teams you want to target individually in each environment. You can do this in the ‘Individual Targets’ section of your feature flag configuration. For example, if your product team currently validates features after they are released to production, you can create a segment for the product team and add it to the individual targets in the production environment. This means that even while the feature flag is off, that team will have access to the feature.
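The following is a minimal in-memory sketch of the evaluation logic described above: one flag configuration per environment, a default rule that starts off, and team segments that are individually targeted in a single environment. It is not Split's SDK or API; `SEGMENTS`, `EnvironmentConfig`, `FeatureFlag`, and the example email addresses are all hypothetical.

```python
from dataclasses import dataclass, field

# Hypothetical team segments; real membership would come from your flag tool or IdP.
SEGMENTS: dict[str, set[str]] = {
    "product_team": {"alice@example.com", "bob@example.com"},
}

# Mirror the environments in your current SDLC.
ENVIRONMENTS = ["Dev", "Test", "QA", "UAT", "Prod"]


@dataclass
class EnvironmentConfig:
    default_on: bool = False  # default rule: off until the feature is validated
    target_segments: set[str] = field(default_factory=set)  # segments that always get "on"


@dataclass
class FeatureFlag:
    name: str
    environments: dict[str, EnvironmentConfig]

    def treatment(self, env: str, user_id: str) -> str:
        cfg = self.environments[env]
        # Individually targeted segments see the feature even while the default rule is off.
        for segment in cfg.target_segments:
            if user_id in SEGMENTS.get(segment, set()):
                return "on"
        return "on" if cfg.default_on else "off"


# One independent config per environment, all starting with the default rule off.
flag = FeatureFlag("new_checkout", {env: EnvironmentConfig() for env in ENVIRONMENTS})
# The product team validates in production, so target them only in Prod.
flag.environments["Prod"].target_segments.add("product_team")
```

Here `flag.treatment("Prod", "alice@example.com")` returns `"on"`, while any user outside the segment gets `"off"` until the default rule is switched on.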

Another important step, whether or not you’re using a tool, is to differentiate between test data and real data in production. One way to do this is to set a boolean attribute on your test entities in production. With Split, you can use is_test_user = true for test users, with is_test_user = false set automatically for real production users. In your analytics and BI tools (for example, Datadog or Looker), you can route all test users’ activity into a separate database, so that you make business decisions based on real user data rather than on data generated by your automated tests in production.
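As a rough illustration, the sketch below separates events by the `is_test_user` attribute before computing a business metric, so automated test traffic cannot skew it. The `Event` shape and the helper functions are hypothetical stand-ins for whatever your event pipeline produces; a missing attribute is treated as a real user.

```python
from dataclasses import dataclass, field


@dataclass
class Event:
    user_id: str
    name: str
    # Attributes sent with the event, e.g. {"is_test_user": True} (hypothetical shape).
    attributes: dict = field(default_factory=dict)


def is_test_event(event: Event) -> bool:
    # A missing attribute counts as a real user (is_test_user = false).
    return bool(event.attributes.get("is_test_user", False))


def partition_events(events: list[Event]) -> tuple[list[Event], list[Event]]:
    """Split events into (real_user_events, test_user_events)."""
    real: list[Event] = []
    test: list[Event] = []
    for event in events:
        (test if is_test_event(event) else real).append(event)
    return real, test


def conversion_rate(events: list[Event], conversion_event: str) -> float:
    """Share of distinct users who fired the conversion event."""
    users = {e.user_id for e in events}
    converted = {e.user_id for e in events if e.name == conversion_event}
    return len(converted) / len(users) if users else 0.0
```

Computing `conversion_rate` only on the first list returned by `partition_events` keeps test purchases out of the numbers your business decisions depend on.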

Aligning on your team’s definition of done is a crucial pillar of success and should happen in this phase. Your entire team should agree that a feature is not considered “Done” until the tests are running in production and the flag is on for 100% of the population.

Run Your First Test in Prod — Days 60-90

The last phase of your implementation plan is where we get to the fun stuff: your first real test in production. You will deploy your first feature to prod with the default rule off for safety, meaning that only targeted users will have access to the feature. You can set this up in your feature flag configuration in Split. Then you will run your automation scripts in production with the test users you’ve targeted, along with your regression suite to ensure previously released features continue to function normally. While the feature flag is off and only your targeted team members have access to the feature, you are testing in production: resolve any bugs and validate that everything functions properly. Keep in mind that if anything does go wrong, there will be no impact on your end users, because they don’t have access yet.

Once you are confident that the feature is working properly and you have resolved all issues, release the feature to 1% of the population through a canary release. With Split’s percentage rollout allocation, you can easily allocate a specific percentage of users to your new feature. As you monitor your error logs and your confidence grows, you can gradually increase that percentage until your entire user base has access. Split monitoring lets you confirm that there is no negative impact on your baseline metrics before you allocate more traffic. Finally, you will turn the default rule on, already knowing that your feature works in production.
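A percentage rollout only works as a canary if each user's assignment is stable: someone who saw the feature at 1% should still see it at 5%. One common way to get that is deterministic hash bucketing, sketched below with SHA-256. This is an illustrative technique, not a description of how Split computes its allocations; `bucket`, `treatment`, and the `"new_checkout"` flag name are hypothetical.

```python
import hashlib


def bucket(user_id: str, flag_name: str) -> int:
    """Deterministically map a user to a bucket in [0, 100) for a given flag."""
    digest = hashlib.sha256(f"{flag_name}:{user_id}".encode("utf-8")).digest()
    return int.from_bytes(digest[:8], "big") % 100


def treatment(user_id: str, flag_name: str, rollout_pct: int, targets: set[str]) -> str:
    """Targeted users always see the feature; everyone else is gated by rollout_pct (0-100)."""
    if user_id in targets:
        return "on"
    return "on" if bucket(user_id, flag_name) < rollout_pct else "off"
```

Because a user's bucket never changes, raising `rollout_pct` from 1 to 5 to 100 only ever adds users; nobody who already had the feature loses it mid-rollout. Salting the hash with the flag name keeps different flags from always exposing the same 1% of users.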

Once you hit the 90-day mark, you should have a regular test cadence in place. Decide with your product team which tests will run at which cadence. For example, your high-priority test suite could run hourly and a lower-priority production suite nightly. You should also set up alerting for each test, so that if a test fails for any reason, you are notified and can investigate as quickly as possible.
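Here is a minimal sketch of that cadence: each suite carries its own interval, only suites that are due get run, and any failure triggers an alert. The suite names, the `run` and `alert` callables, and the scheduling loop are hypothetical; in practice you would more likely hand the schedule to cron or your CI system and send alerts to your paging or chat tool.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from typing import Callable, Optional


@dataclass
class Suite:
    name: str
    priority: str
    interval: timedelta
    last_run: Optional[datetime] = None


# Hypothetical suites: high priority hourly, lower priority nightly.
SUITES = [
    Suite("checkout_smoke", "high", timedelta(hours=1)),
    Suite("full_regression", "low", timedelta(days=1)),
]


def due_suites(suites: list[Suite], now: datetime) -> list[Suite]:
    """Suites that have never run or whose interval has elapsed."""
    return [s for s in suites if s.last_run is None or now - s.last_run >= s.interval]


def run_due(
    suites: list[Suite],
    now: datetime,
    run: Callable[[Suite], bool],
    alert: Callable[[str], None],
) -> None:
    """Run every due suite; `run` returns True on pass, and failures are sent to `alert`."""
    for suite in due_suites(suites, now):
        passed = run(suite)
        suite.last_run = now
        if not passed:
            alert(f"[{suite.priority}] {suite.name} failed at {now.isoformat()}")
```

Tagging each alert with the suite's priority makes it easy to route high-priority failures to an on-call channel and nightly failures to a daily review.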

At this point, it’s a good idea to hold a retrospective with your team to identify what worked well and which parts of the process need improvement. Aligning on each stakeholder’s roles and responsibilities is imperative for optimal performance.

If you’ve implemented your feature with Split, you’ll have access to our technical documentation and support team throughout the entire onboarding process, and we’re always excited to lend a hand.

Test in Production, Now and Always

Hopefully, I have reduced the burden of creating a testing plan from scratch with this guide. Now you can set up tests in production in as little as 90 days! Remember that most of the pushback against testing in production comes from fear: fear of impacting your user base, fear of impacting real data in the live environment, and fear of generally breaking things in production. These fears and risks can all be mitigated with feature flags. When implemented correctly, feature flags open the door to many possibilities beyond testing in production, such as canary releases. Don’t even get us started on the pre-production environment.

Learn More About Testing in Production and Feature Flags

Testing in production can be overwhelming when you don’t have a plan. With this implementation guide and the following resources, you’ll be on your way to testing like a pro no matter the use case.

- Read about the 5 Best Practices for Testing in Production

- If you’re having trouble convincing your team to test in production, link them to our Breakup Letter to Staging

- Learn more about The Benefits of Feature Flags in Software Development

To stay up to date on all things testing in prod and feature flagging, follow us on Twitter @splitsoftware, and subscribe to our YouTube channel!

Switch It On With Split

The Split Feature Data Platform™ gives you the confidence to move fast without breaking things. Set up feature flags and safely deploy to production, controlling who sees which features and when. Connect every flag to contextual data, so you can know if your features are making things better or worse and act without hesitation. Effortlessly conduct feature experiments like A/B tests without slowing down. Whether you’re looking to increase your releases, to decrease your MTTR, or to ignite your dev team without burning them out, Split is both a feature management platform and a partnership to revolutionize the way work gets done. Schedule a demo to learn more.

Get Split Certified

Split Arcade includes product explainer videos, clickable product tutorials, manipulatable code examples, and interactive challenges.