Peak traffic refers to a temporary period during which a website or application sees higher volumes of traffic than during the rest of the year. It is often associated with holiday shopping, seasonal sales, annual enrollment periods, or a big event, like Taylor Swift “breaking the internet” with her concert ticket sales.

Why Run Experiments During Peak Traffic?

A possible short-term loss can produce learnings that lead to big wins in the long run. Hesitancy to test is often due to perceived financial or operational risk. But refusing to test means missing a prime window for completing tests quickly, and that missed opportunity can itself cost money.

The benefits of running experiments during peak traffic are many. It allows you to analyze unique customer behaviors, optimize faster due to increased sample size, apply more granular targeting, and maintain the momentum of your experimentation program. High-traffic tests also allow engineering teams to detect issues under high load.
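Higher traffic shortens tests because the required sample size stays the same while users arrive faster. The minimal Python sketch below uses the standard sample-size approximation for a two-sided, two-proportion z-test and turns it into an estimated test duration. The baseline conversion rate, minimum detectable effect, α = 0.05, 80% power, and traffic figures are illustrative assumptions, not numbers from this article.

```python
from math import ceil
from statistics import NormalDist


def sample_size_per_variant(baseline_rate: float, relative_mde: float,
                            alpha: float = 0.05, power: float = 0.8) -> int:
    """Approximate users needed per variant for a two-sided, two-proportion z-test."""
    p1 = baseline_rate
    p2 = baseline_rate * (1 + relative_mde)  # smallest improved rate we want to detect
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)
    z_beta = NormalDist().inv_cdf(power)
    variance = p1 * (1 - p1) + p2 * (1 - p2)
    return ceil((z_alpha + z_beta) ** 2 * variance / (p2 - p1) ** 2)


def days_to_complete(daily_visitors: int, variants: int, baseline_rate: float,
                     relative_mde: float, traffic_share: float = 1.0) -> int:
    """Whole days until every variant reaches the required sample size.

    traffic_share is the fraction of daily visitors allocated to the experiment.
    """
    needed_total = sample_size_per_variant(baseline_rate, relative_mde) * variants
    return ceil(needed_total / (daily_visitors * traffic_share))
```

As an example, compare `days_to_complete(100_000, 2, 0.03, 0.10)` with `days_to_complete(20_000, 2, 0.03, 0.10)`. This models a hypothetical peak day with five times the off-peak traffic. The required sample is identical in both cases, so the duration shrinks roughly in proportion to the traffic increase, rounded up to whole days.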

Still, several considerations come into play. For instance, the demographics and needs of users may differ between peak and non-peak periods. You also shouldn’t casually apply peak-period learnings year-round, because user behavior may be different during peak periods due to:

- Higher sense of internal urgency

- Competing factors (e.g., shipping speed vs. product savings)

- A different traffic mix or ad spend

How to Execute

Risk and opportunity are two sides of the same coin, but A/B testing can tip the scale by reducing risk while you explore opportunities.

Here are 8 tips for running experiments during peak traffic:

1. Prioritize Evidence-Based Hypotheses

Eliminate wasteful testing.

- Focus on relevant problems to surface the most promising ideas.

- Make more accurate predictions about how users will respond to changes. Big wins during peak traffic can also help sell the benefits of testing to the rest of the organization.

- Classify ideas into two categories to improve future decision-making: hypotheses that likely apply only during the peak period vs. hypotheses that may apply during both peak and non-peak periods.

- Avoid spending time on changes that are unlikely to have a significant impact.

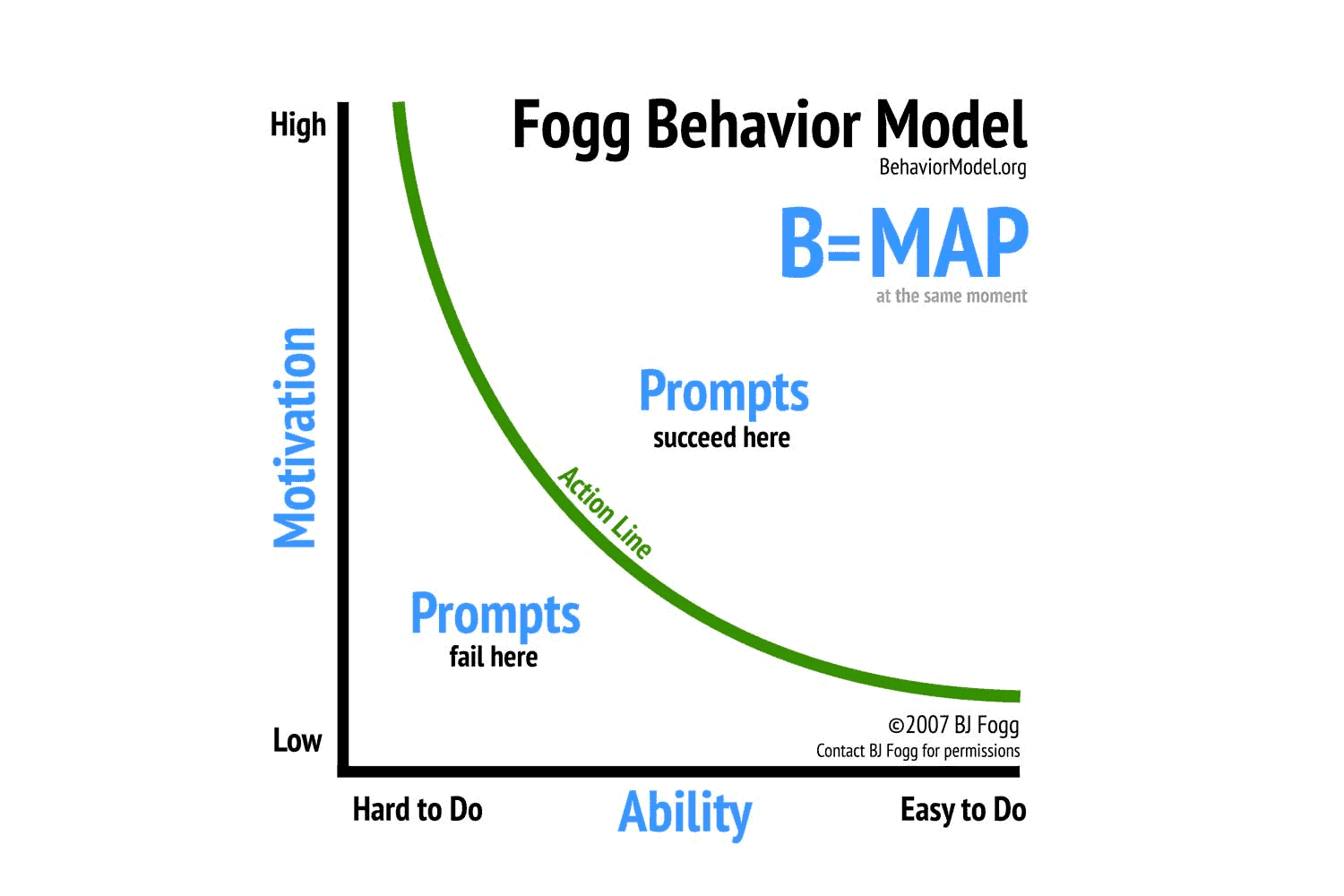
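One way to make the two-category classification and the low-impact filter concrete is a small, hypothetical backlog structure. In this Python sketch, the `Scope` values, the `expected_lift` estimate, the `evidence` list, and the 2% cutoff are all assumptions chosen for illustration. Ranking by the number of evidence sources first is one simple design choice, not a prescribed method.

```python
from dataclasses import dataclass, field
from enum import Enum


class Scope(Enum):
    PEAK_ONLY = "peak_only"                  # likely holds only during the peak period
    PEAK_AND_NON_PEAK = "peak_and_non_peak"  # candidate for a non-peak retest


@dataclass
class Hypothesis:
    name: str
    scope: Scope
    expected_lift: float  # hypothetical team estimate, e.g. 0.05 for a 5% relative lift
    evidence: list[str] = field(default_factory=list)  # e.g. analytics findings, user research


def peak_backlog(ideas: list[Hypothesis], min_lift: float = 0.02) -> list[Hypothesis]:
    """Keep evidence-backed ideas with a meaningful expected lift, best supported first."""
    qualified = [h for h in ideas if h.evidence and h.expected_lift >= min_lift]
    return sorted(qualified, key=lambda h: (len(h.evidence), h.expected_lift), reverse=True)


def retest_candidates(ideas: list[Hypothesis]) -> list[str]:
    """Names of hypotheses worth retesting outside the peak period."""
    return [h.name for h in ideas if h.scope is Scope.PEAK_AND_NON_PEAK]
```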

2. Consider the Fogg Behavior Model

Ensure appropriate motivation, ability, and prompts exist.

- Align these three elements at the same moment to produce a target action (e.g., conversion).

- During peak traffic, focus more on emphasizing core user motivations and less on usability, since users are highly motivated during these periods (e.g., by fast shipping that arrives in time for a holiday).

- Use qualitative research to understand what drives user decisions.

3. Set Up a Gradual Rollout

Minimize your blast radius.

- Analyze anticipated traffic volume based on historical data and trends.

- Determine the percentage of traffic you are comfortable running experiments with at launch.

- Use a traffic allocation tool to limit how many users are bucketed into the experiment, and increase that share in stages.
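A common way to implement a gradual rollout is deterministic hash-based bucketing. Each user hashes to a stable bucket, and only buckets below the current allocation are exposed. The Python sketch below assumes SHA-256 hashing, 10,000 buckets, and a hypothetical experiment name. Feature management tools implement their own bucketing, so treat this only as an illustration of the idea.

```python
import hashlib
from typing import Optional

BUCKETS = 10_000  # allocation granularity: one bucket is 0.01% of traffic


def bucket(user_id: str, salt: str) -> int:
    """Map a user to a stable bucket in [0, BUCKETS) for the given salt."""
    digest = hashlib.sha256(f"{salt}:{user_id}".encode("utf-8")).digest()
    return int.from_bytes(digest[:8], "big") % BUCKETS


def assign(user_id: str, experiment: str, traffic_allocation: float,
           variants: tuple = ("control", "treatment")) -> Optional[str]:
    """Return the user's variant, or None if they fall outside the current allocation.

    traffic_allocation is the share of users exposed, e.g. 0.05 in an early ramp step.
    """
    if bucket(user_id, f"{experiment}:allocation") >= traffic_allocation * BUCKETS:
        return None  # not exposed: serve the default experience
    # A separate salt keeps variant assignment independent of the allocation cut,
    # so a user keeps the same variant as the allocation grows.
    return variants[bucket(user_id, f"{experiment}:variant") % len(variants)]
```

The allocation check compares a stable bucket against a growing threshold. Raising `traffic_allocation` from, say, 0.05 to 0.20 therefore keeps every already-exposed user in the experiment. The separate variant salt keeps each user in the same variant throughout the ramp.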

4. Apply Targeting and Exclusions

Manage test exposure and personalization.

- Exclude high-value users via targeting rules if risk is a concern.

- Consider personalization needs based on demographics, geolocation, language, device type, and other attributes.

- Avoid targeting that is too granular: each targeted segment still needs enough randomized traffic for statistical rigor.
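Targeting rules with an exclusion for high-value users can be sketched as a simple eligibility check that runs before randomization. The attribute names, the lifetime-value cutoff, and the minimum segment size below are hypothetical. The `segment_too_small` helper reflects the last bullet by flagging rules that leave too few users to randomize.

```python
from dataclasses import dataclass


@dataclass
class User:
    user_id: str
    country: str
    language: str
    device: str
    lifetime_value: float  # hypothetical attribute used for the high-value exclusion


@dataclass
class TargetingRule:
    countries: set[str]
    languages: set[str]
    devices: set[str]
    max_lifetime_value: float  # users above this value are excluded to limit risk


def is_eligible(user: User, rule: TargetingRule) -> bool:
    """Apply the exclusion first, then require a match on every targeting attribute."""
    if user.lifetime_value > rule.max_lifetime_value:
        return False
    return (user.country in rule.countries
            and user.language in rule.languages
            and user.device in rule.devices)


def segment_too_small(users: list[User], rule: TargetingRule, min_users: int) -> bool:
    """Flag rules so narrow that too few eligible users remain to randomize."""
    return sum(is_eligible(u, rule) for u in users) < min_users
```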

5. Collaborate Across the Organization

Ensure all teams are in sync with the experiment.

- Align on business goals: Results should be relevant to the overall success of the company.

- Conduct a thorough risk assessment, as different teams bring different perspectives.

- Maintain clear communication and coordination between teams (e.g., engineering, product, marketing, and customer support) during the rollout.

- Avoid conflicting experiments, such as two tests that change the same page or flow for the same users at the same time.
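One common way to keep experiments from conflicting is a mutual-exclusion layer. Experiments that touch the same surface share a layer, and each receives a disjoint slice of that layer's traffic, so no user is in two of them at once. The Python sketch below is a hypothetical illustration of that idea, not a description of any particular tool's feature. The layer name, the 100 slots, and the SHA-256 hashing are assumptions.

```python
import hashlib
from typing import Optional


class ExclusionLayer:
    """Experiments in one layer get disjoint slot ranges, so no user sees two of them."""

    def __init__(self, name: str, slots: int = 100):
        self.name = name
        self.slots = slots
        self.ranges: dict[str, range] = {}

    def add(self, experiment: str, share: float) -> None:
        """Reserve the next `share` of the layer's slots for an experiment."""
        start = max((r.stop for r in self.ranges.values()), default=0)
        stop = start + round(share * self.slots)
        if stop > self.slots:
            raise ValueError(f"layer {self.name!r} has no room left for {experiment!r}")
        self.ranges[experiment] = range(start, stop)

    def slot(self, user_id: str) -> int:
        """Stable slot for a user within this layer."""
        digest = hashlib.sha256(f"{self.name}:{user_id}".encode("utf-8")).digest()
        return int.from_bytes(digest[:8], "big") % self.slots

    def experiment_for(self, user_id: str) -> Optional[str]:
        """The single experiment this user may enter in this layer, if any."""
        s = self.slot(user_id)
        for experiment, reserved in self.ranges.items():
            if s in reserved:
                return experiment
        return None
```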

6. Create a Launch Strategy

Answer the questions “who?,” “what?,” and “when?”

- What are the launch roles and actions, including a potential rollback plan?

- When does each action need to occur?

- Who will perform them?

- What tool(s) will be used?

- What are the conditions that will trigger a rollback?

- Who needs to be informed about any changes?

7. Monitor Results Closely

Use alerts with degradation thresholds on your key metrics.

- Agree on the key metric(s) when designing the experiment.

- Set up automated alerts for these metrics (and others, where applicable) that fire when performance degrades beyond a designated threshold.

- Gradually roll out the experiment: Don’t look for statistical significance while ramping; just monitor for red flags.

- Use graphs and data tables to look for potential outliers.
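The alerting bullets can be sketched as a guardrail check. It compares treatment with control and flags any key metric that has worsened past its agreed degradation threshold. This is a hypothetical Python illustration in which the metric names and thresholds are assumptions. In line with the advice not to chase significance while ramping, it is a red-flag check only, not a statistical test.

```python
from dataclasses import dataclass


@dataclass
class Guardrail:
    metric: str
    higher_is_better: bool
    max_degradation: float  # allowed relative worsening, e.g. 0.05 for 5%


def relative_change(control: float, treatment: float) -> float:
    """Relative difference of treatment versus control."""
    return (treatment - control) / control


def breached(guardrails: list[Guardrail], control: dict[str, float],
             treatment: dict[str, float]) -> list[str]:
    """Return alert messages for metrics that degraded past their threshold."""
    alerts = []
    for g in guardrails:
        change = relative_change(control[g.metric], treatment[g.metric])
        # Express every metric so that a positive number means "worse".
        degradation = -change if g.higher_is_better else change
        if degradation > g.max_degradation:
            alerts.append(f"{g.metric}: {degradation:.1%} worse than control "
                          f"(threshold {g.max_degradation:.1%})")
    return alerts
```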

8. Retest During Non-Peak Period

Determine whether hypotheses apply outside of peak periods.

- Decide which experiments may also apply during regular business periods.

- Monitor the experiment using the same key metrics.

- Pay close attention to any differences between the results obtained during peak periods and non-peak periods.

- Formulate new hypotheses for non-peak periods based on the conclusions drawn.
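One option for checking whether a peak-period result holds afterward is to compare the treatment effect from each period directly. The Python sketch below computes the absolute lift in conversion rate for each period. It then runs a normal-approximation z-test on the difference between the two lifts, assuming the periods are independent, reasonably large samples. Any real counts would come from your own experiment data.

```python
from dataclasses import dataclass
from math import sqrt
from statistics import NormalDist


@dataclass
class Arm:
    users: int
    conversions: int

    @property
    def rate(self) -> float:
        return self.conversions / self.users


def effect(control: Arm, treatment: Arm) -> tuple[float, float]:
    """Absolute lift in conversion rate and its unpooled standard error."""
    diff = treatment.rate - control.rate
    se = sqrt(control.rate * (1 - control.rate) / control.users
              + treatment.rate * (1 - treatment.rate) / treatment.users)
    return diff, se


def compare_periods(peak: tuple[Arm, Arm], non_peak: tuple[Arm, Arm]) -> dict[str, float]:
    """Test whether the treatment effect differs between peak and non-peak periods.

    Each argument is a (control, treatment) pair of arms from one period.
    """
    d_peak, se_peak = effect(*peak)
    d_off, se_off = effect(*non_peak)
    z = (d_peak - d_off) / sqrt(se_peak ** 2 + se_off ** 2)
    p_value = 2 * (1 - NormalDist().cdf(abs(z)))
    return {"peak_lift": d_peak, "non_peak_lift": d_off, "z": z, "p_value": p_value}
```

A small p-value suggests that the effect observed during peak traffic did not carry over unchanged. That kind of gap is a natural starting point for new non-peak hypotheses.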

With these steps, you can successfully run experiments, even when your site is selling hotcakes like…hotcakes. By instilling a culture of collaboration, monitoring, and strategy, peak traffic won’t stand in the way of great tests.

Switch It On With Split

Split gives product development teams the confidence to release features that matter faster. It’s the only feature management and experimentation solution that automatically attributes data-driven insight to every feature that’s released—all while enabling astoundingly easy deployment, profound risk reduction, and better visibility across teams. Split offers more than a platform: It offers partnership. By sticking with customers every step of the way, Split illuminates the path toward continuous improvement and timely innovation. Switch on a trial account, schedule a demo, or contact us for further questions.