Split’s Feature Data Platform

Split’s Feature Data Platform™ combines capabilities for feature delivery and control with built-in tools for measurement and learning.

The platform is backed by a patented Attribution Engine that joins feature flag data with performance and behavioral data, empowering teams to speed up releases, mitigate risk, and maximize business outcomes for every application change.

INSTANT FEATURE IMPACT DETECTION

Split pairs feature flags with performance and behavior data. From page load times to errors and shopping cart values, Split immediately calculates the impact of new features on every metric of every rollout. We call this Instant Feature Impact Detection (IFID). With pinpoint precision, IFID helps you quickly catch issues that affect your application. Not even APM tools can detect failures like IFID can. Now you can know with certainty if the software rollout should progress to 100% or be turned off.

Feature Measurement & Learning

Know When It’s Safe to Release or Not

When you move from “all or nothing” releases to gradual rollouts with feature flags, you’ll need a better way to detect issues. Looking for big changes in your APM graphs is risky and inefficient.

Split’s automated rollout monitoring is a component of Instant Feature Impact Detection (IFID), so you can take action early in a gradual rollout, long before most users are impacted.

Get Alerts, Eliminate Fire Drills

If something goes wrong, Instant Feature Impact Detection (IFID) catches it, pinpoints the exact flag causing the issue, and immediately notifies the team responsible.

If you do have a failed feature, it’s easy to disable it with one click. No need to worry about complicated rollbacks.

Instant Feature Impact Detection (IFID) is the Gateway to Experimentation

Feature flags? Check. Data? Check. We’ve got all the ingredients you need to A/B test new features and measure impact.

Split randomizes users, automatically attributes data, and calculates impact for every feature you put behind a flag. All you have to do is focus on the results.
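To make the idea of attribution concrete, here is a minimal sketch of joining flag evaluations with metric events and comparing the result per treatment. It is an illustration only, not Split's Attribution Engine: the function names, the tuple layout, and the "first treatment seen wins" rule are all assumptions made for this example.

```python
from collections import defaultdict
from statistics import mean


def attribute_events(impressions, events):
    """Group metric values by the treatment each user was served.

    impressions: iterable of (user_id, treatment) pairs, in the order served.
    events: iterable of (user_id, value) pairs for a single metric.
    Both shapes are hypothetical and chosen only for this sketch.
    """
    # Assumption: a user is attributed to the first treatment they saw.
    treatment_by_user = {}
    for user_id, treatment in impressions:
        treatment_by_user.setdefault(user_id, treatment)

    values_by_treatment = defaultdict(list)
    for user_id, value in events:
        treatment = treatment_by_user.get(user_id)
        if treatment is not None:  # skip users who never evaluated the flag
            values_by_treatment[treatment].append(value)
    return dict(values_by_treatment)


def mean_by_treatment(impressions, events):
    """Average the metric separately for each treatment."""
    grouped = attribute_events(impressions, events)
    return {treatment: mean(values) for treatment, values in grouped.items()}


# Made-up sample data: two users per treatment, plus one user with no impression.
impressions = [("u1", "on"), ("u2", "off"), ("u3", "on"), ("u4", "off")]
page_load_ms = [("u1", 900), ("u2", 700), ("u3", 1100), ("u4", 720), ("u5", 650)]

print(mean_by_treatment(impressions, page_load_ms))
```

A real comparison would also need a statistical significance test before you act on the difference; this sketch only shows the join.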

Automatically Capture Performance Data

Split Suite captures performance event data automatically on web and mobile, and it’s the most efficient way to get started with Instant Feature Impact Detection (IFID). Wherever you use the Split SDK, simply use Split Suite instead. There’s no need for additional RUM agents, track calls, or integrations.

Leverage Your Existing Data With Ease

Import data from your data pipeline to unlock monitoring, alerting and experimentation. Easily use existing data from Twilio Segment or mParticle CDPs. Send bulk events via S3 buckets or to our API. Export impressions (records of flag evaluations) and audit log entries for downstream analysis.

Feature Delivery & Control

Go From Code to Customer Fast

With feature flags, you can deploy code when you want and release when you’re ready.

Gradually release with targeting rules, not deployments. Manage complex releases with less stress.

Practice trunk-based development, where unfinished code is merged daily behind a flag that is off by default. Say goodbye to painful merge conflicts lurking in long-running branches.

Targeted Rollouts Let You Learn Without Getting Burned

Once you are ready to release, Split’s sophisticated targeting makes it easy to test in production and then release a feature to segments of your user base.
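As a rough illustration of how a targeted, gradual rollout can work, the sketch below combines a segment rule with a percentage rule. It is a generic technique, not Split's implementation: the flag dictionary, the `segment` attribute, and the use of SHA-256 for bucketing are assumptions made for this example.

```python
import hashlib


def bucket(user_id: str, flag_name: str, buckets: int = 100) -> int:
    """Map a user to a stable bucket in [0, buckets) for a given flag."""
    digest = hashlib.sha256(f"{flag_name}:{user_id}".encode("utf-8")).digest()
    return int.from_bytes(digest[:8], "big") % buckets


def evaluate(flag: dict, user_id: str, attributes: dict) -> str:
    """Return "on" or "off" for one user under this sketch's rule format."""
    # Rule 1: users in an explicitly targeted segment always get the feature.
    if attributes.get("segment") in flag["allowed_segments"]:
        return "on"
    # Rule 2: everyone else is admitted by percentage, using the stable bucket.
    if bucket(user_id, flag["name"]) < flag["rollout_percent"]:
        return "on"
    return "off"


# Hypothetical flag definition: employees first, then 10% of everyone else.
flag = {"name": "new_checkout", "allowed_segments": {"employees"}, "rollout_percent": 10}

print(evaluate(flag, "user-42", {"segment": "employees"}))
```

Because the bucket depends only on the flag name and user ID, a user who is admitted at 10% stays admitted when the percentage is raised, so widening the rollout never flips anyone back to "off".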

Make Changes Without Pushing New Code

Dynamic configurations alter feature attributes on the fly, in production, and without code changes.

By abstracting changes from the code, you can also allow any approved team member (e.g., a product manager) to adjust feature attributes.
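One way to picture a dynamic configuration is a JSON payload attached to each treatment and merged over defaults that live in code. The sketch below assumes exactly that; `DEFAULT_CONFIG`, `resolve_config`, and the payload keys are hypothetical names for illustration and are not part of the Split SDK.

```python
import json

# Defaults that ship with the code; remote values only override these keys.
DEFAULT_CONFIG = {"button_color": "blue", "max_items": 10}


def resolve_config(treatment: str, configs_by_treatment: dict) -> dict:
    """Merge the JSON config attached to a treatment over the code defaults."""
    raw = configs_by_treatment.get(treatment)
    if raw is None:
        return dict(DEFAULT_CONFIG)
    try:
        overrides = json.loads(raw)
    except json.JSONDecodeError:
        # A malformed payload should never break the feature; use defaults.
        return dict(DEFAULT_CONFIG)
    if not isinstance(overrides, dict):
        return dict(DEFAULT_CONFIG)
    return {**DEFAULT_CONFIG, **overrides}


# Hypothetical remote payloads, editable without a deployment.
remote = {"on": '{"button_color": "green"}', "off": None}

print(resolve_config("on", remote))
```

Keeping defaults in code means a missing or malformed payload degrades to known-good behavior instead of failing.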

Keep All Tools, Processes, and People on the Same Page

Seamlessly coordinate across teams, schedule releases, manage technical debt, standardize approval flows, and ensure visibility across the enterprise with Split’s collaboration and workflow capabilities.

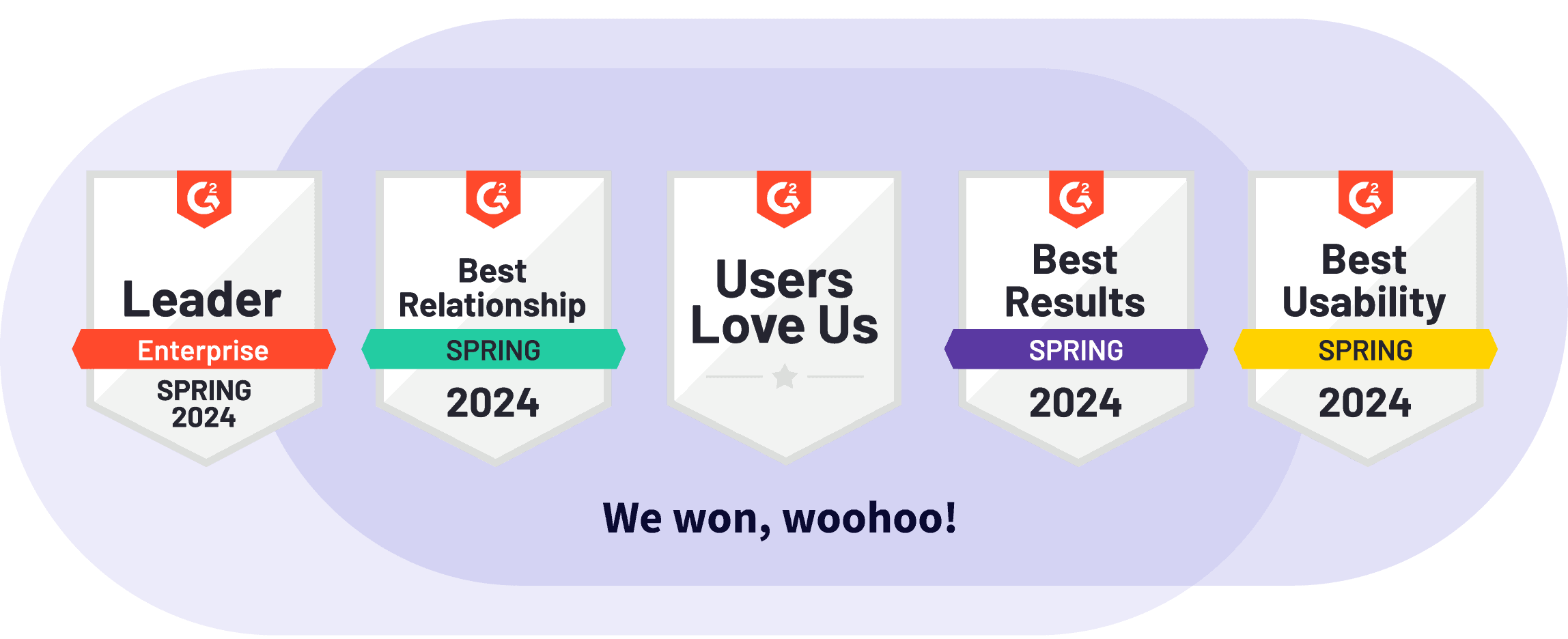

CUSTOMERS RAVE ABOUT OUR PLATFORM

Enterprise-Grade Capabilities

If your organization is complex and highly regulated, it’s all about capabilities that ensure governance, flexibility, and automation of processes.

With flexible deployment options, role-based access control, approval flows, private workspaces, and audit logs, Split is designed to match the needs of enterprise teams at scale.

Architected for Performance, Security, and Resilience

Split’s streaming architecture pushes changes to its SDKs in milliseconds.

The SDKs evaluate feature flags locally, so customer data is never sent over the internet.

Our SaaS app, data platform, and API span multiple data centers. Plus, our SDKs cache locally to handle any network interruptions.
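The local-caching idea can be sketched as a small store that keeps the last successfully fetched flag definitions and keeps answering from them when a refresh fails. This is a simplified illustration under stated assumptions, not the Split SDK: `CachedFlagStore`, `flaky_fetch`, and the flag-to-treatment dictionary are hypothetical.

```python
class CachedFlagStore:
    """Serve flag treatments from the last successful fetch."""

    def __init__(self, fetch):
        self._fetch = fetch  # callable returning {flag_name: treatment}
        self._cache = {}

    def refresh(self) -> bool:
        """Try to update the cache; keep the old values if the network fails."""
        try:
            self._cache = dict(self._fetch())
            return True
        except OSError:  # covers ConnectionError and TimeoutError
            return False

    def get_treatment(self, flag_name: str, default: str = "off") -> str:
        """Evaluate locally; unknown flags fall back to a safe default."""
        return self._cache.get(flag_name, default)


# Simulated backend: the first fetch succeeds, later fetches fail.
responses = iter([{"new_checkout": "on"}])


def flaky_fetch():
    try:
        return next(responses)
    except StopIteration:
        raise ConnectionError("network unavailable") from None


store = CachedFlagStore(flaky_fetch)
first_refresh = store.refresh()   # succeeds and fills the cache
second_refresh = store.refresh()  # fails, cache is left untouched
print(first_refresh, second_refresh, store.get_treatment("new_checkout"))
```

Evaluation never touches the network in this sketch, which is why an outage only delays updates rather than changing what users see.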

SWITCH IT ON

Ignite features that matter fast with the Split Feature Data Platform.