Inputs are a special class of interaction that prompts a user either to make a selection or to provide text. They include entry fields and forms, radio buttons, checkboxes, sliders, dropdowns, and any other means of selection. The inputs then either modify the page directly or are submitted to a backend service for processing or storage.

Submission can be triggered by a button click, at which point the state or contents of the input elements can also be tracked. Be very conscious of the type and sensitivity of those inputs: some fields provide data that is essential to track (such as responses to satisfaction surveys), while other data should be kept out of monitoring systems to protect user privacy.

Event Tracking

Tracking inputs can be split between users’ interactions with form elements and the final submission of that data. If input validation is in place, it is also helpful to fire an event on rejected inputs to better understand where validation is catching bad inputs or causing problems for customers. Inputs can be a very high-volume source of tracking traffic, so it helps to select specific elements to track rather than instrumenting every input.
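The sketch below shows one way these events might be organized in TypeScript. It defines one hypothetical event type per stage (exposure, input change, rejection, submission). All events go through a single `track` function, which forwards only events for an explicit allowlist of forms. The event names, property names, and the `TRACKED_FORMS` set are illustrative assumptions. They are not the schema of any particular analytics tool.

```typescript
// Hypothetical event schema for form tracking; names are illustrative only.
type FormEvent =
  | { type: "form_exposure"; formId: string }
  | { type: "input_change"; formId: string; fieldId: string }
  | { type: "input_rejected"; formId: string; fieldId: string; reason: string }
  | { type: "form_submit"; formId: string; values: Record<string, string> };

// Only forms listed here are tracked, which keeps input event volume manageable.
const TRACKED_FORMS = new Set<string>(["signup", "nps-survey"]);

// Stand-in for an analytics backend: accepted events are kept in memory.
const sent: FormEvent[] = [];

function track(formEvent: FormEvent): void {
  if (TRACKED_FORMS.has(formEvent.formId)) {
    sent.push(formEvent);
  }
}

track({ type: "input_change", formId: "signup", fieldId: "email" });
track({ type: "input_change", formId: "footer-newsletter", fieldId: "email" }); // not allowlisted, dropped
track({ type: "form_submit", formId: "signup", values: { plan: "pro" } });
console.log(sent.length); // 2
```

Keeping the allowlist in one place means each form is added to tracking deliberately. This avoids high-volume input events from every field on the site.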

Form Exposure

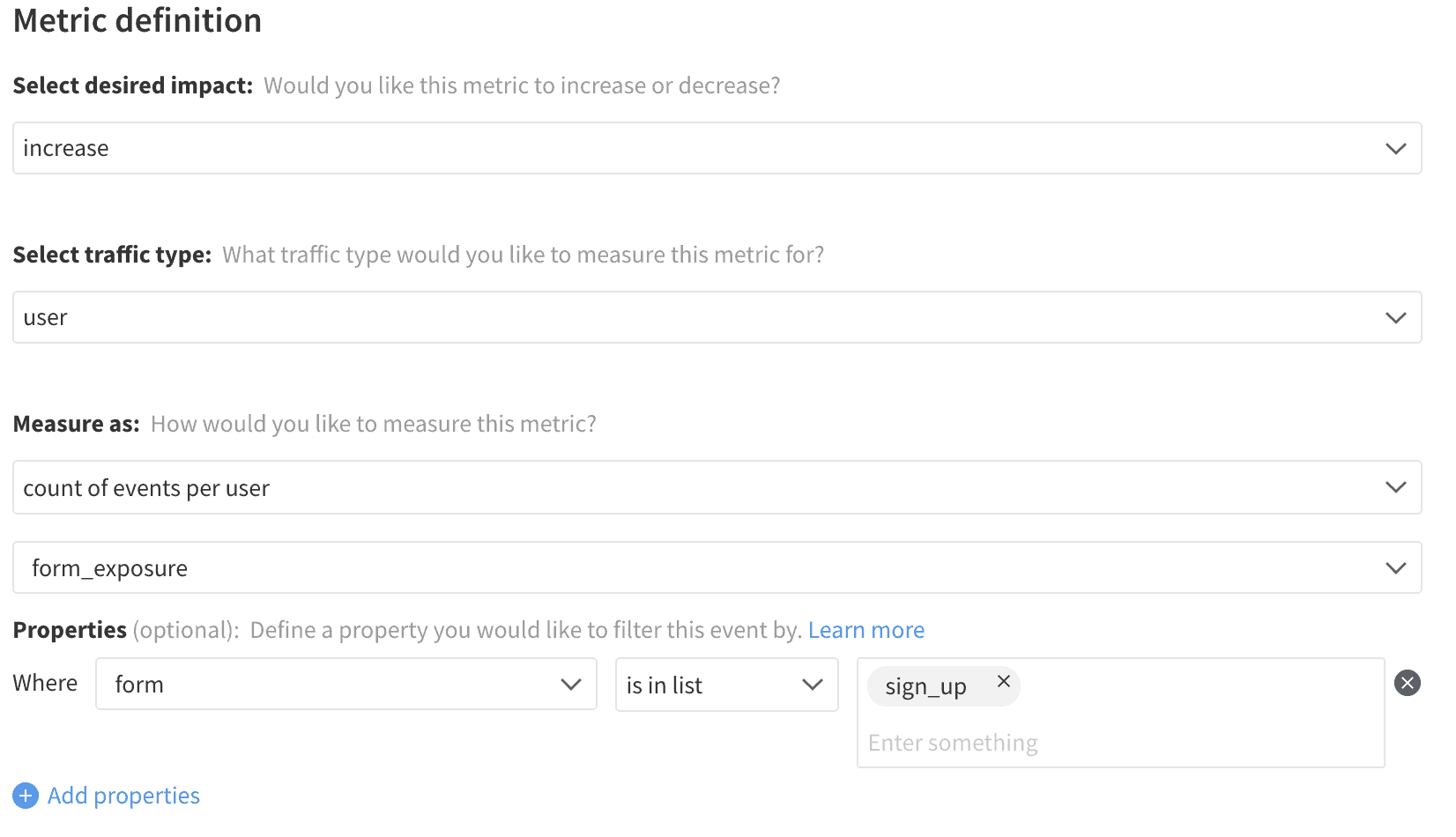

In most cases, a page view event for the location where a form or input lives is sufficient to indicate a customer’s exposure to that form. However, the same set of inputs may live on multiple landing pages or be presented as a modal or pop-in on multiple pages. In those cases, the application can either fire the exposure event explicitly as part of the logic that shows the form, or maintain standardized JavaScript that looks for form elements on the page and fires an exposure event when one exists. The event should include the form’s identifier so that metrics can tie the exposure to the later input and submission events.
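The standardized-script approach could look roughly like this sketch. It assumes a hypothetical convention in which each form carries a `data-form-id` attribute. It also remembers which form ids have already been exposed, so calling it again (for example, after a modal opens) does not double-count. The `QueryRoot` and `FormLike` interfaces are minimal stand-ins that let the example run outside a browser. In a browser, `document` should satisfy them structurally.

```typescript
// Minimal structural types so the sketch runs outside a browser.
interface FormLike {
  getAttribute(name: string): string | null;
}
interface QueryRoot {
  querySelectorAll(selector: string): ArrayLike<FormLike>;
}
type ExposureEvent = { type: "form_exposure"; formId: string };

// Finds every form with a (hypothetical) data-form-id attribute and fires one
// exposure event per form id that has not already been exposed in this page view.
function fireFormExposures(
  root: QueryRoot,
  track: (e: ExposureEvent) => void,
  alreadyExposed: Set<string>,
): void {
  for (const form of Array.from(root.querySelectorAll("form[data-form-id]"))) {
    const formId = form.getAttribute("data-form-id");
    if (formId !== null && !alreadyExposed.has(formId)) {
      alreadyExposed.add(formId);
      track({ type: "form_exposure", formId });
    }
  }
}

// Fake page for illustration: the selector is ignored and a fixed list is returned,
// including the same form twice (e.g. an inline copy and a modal copy).
const fakePage: QueryRoot = {
  querySelectorAll: () => [
    { getAttribute: () => "signup" },
    { getAttribute: () => "signup" },
    { getAttribute: () => "feedback" },
  ],
};

const exposures: ExposureEvent[] = [];
const exposedThisView = new Set<string>();
fireFormExposures(fakePage, (e) => exposures.push(e), exposedThisView);
fireFormExposures(fakePage, (e) => exposures.push(e), exposedThisView); // e.g. after a modal opens
console.log(exposures.map((e) => e.formId)); // [ 'signup', 'feedback' ]
```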

Input

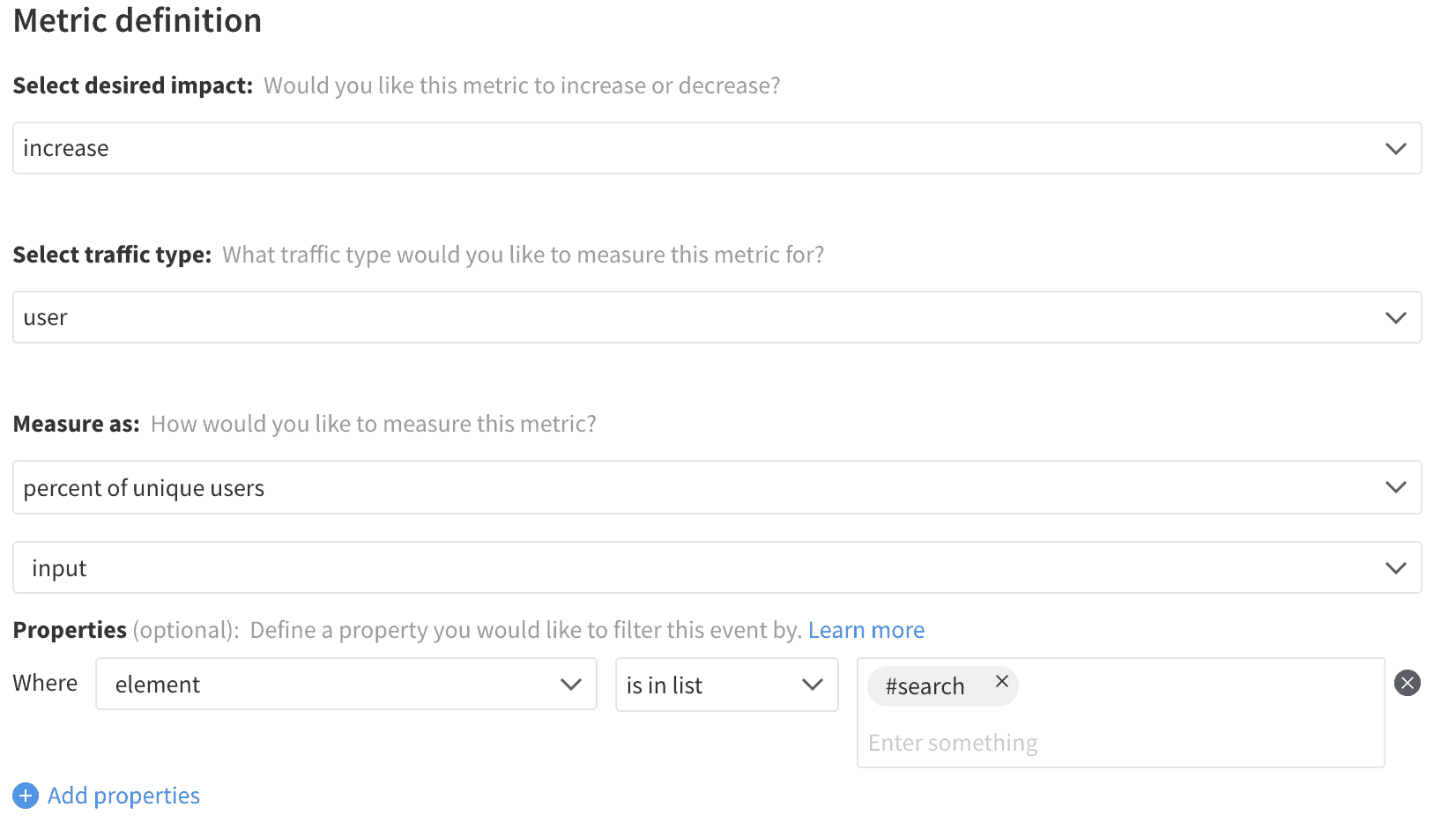

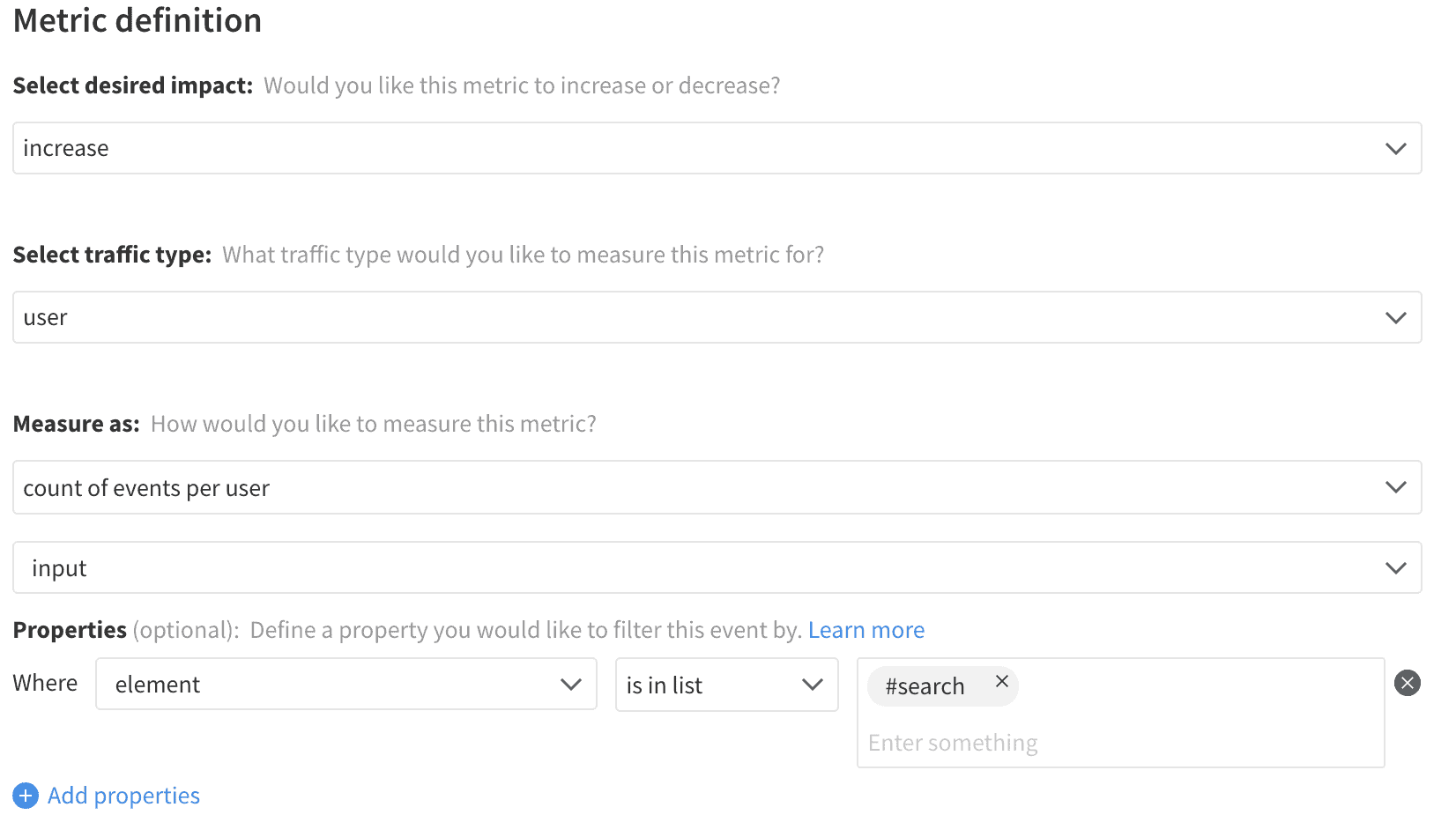

Any input field can change many times during a single form fill, whether because the user adds more text or changes their mind, or because of a malicious script or simply a stuck key. Firing an event on every change allows for some subtle analysis, but most of the value comes from simply noting which fields received any interaction. Once a change is detected for an element, it can be recorded, and the element can then be ignored for the remainder of that page view or session. This reduces event bloat and simplifies metric creation. These change events should include the input element and form identifiers as properties, so that the related metrics can be broken down by field and form.
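This is a minimal sketch of the record-once approach. It assumes a hypothetical `track` callback and a `formId`/`fieldId` pair that identifies each element. The tracker keeps a set of fields that have already changed. It drops every later change to those fields until `reset` is called at the start of a new page view or session.

```typescript
type InputChangeEvent = { type: "input_change"; formId: string; fieldId: string };

// Records only the first change to each field; later changes (more typing,
// a changed decision, a stuck key) are ignored until reset() is called.
class FirstChangeTracker {
  private readonly seen = new Set<string>();
  private readonly track: (e: InputChangeEvent) => void;

  constructor(track: (e: InputChangeEvent) => void) {
    this.track = track;
  }

  onChange(formId: string, fieldId: string): void {
    const key = `${formId}::${fieldId}`;
    if (this.seen.has(key)) return;
    this.seen.add(key);
    this.track({ type: "input_change", formId, fieldId });
  }

  // Call at the start of a new page view (or session) to record first changes again.
  reset(): void {
    this.seen.clear();
  }
}

const changes: InputChangeEvent[] = [];
const tracker = new FirstChangeTracker((e) => changes.push(e));
// Simulate five keystrokes in the email field, then one change to the plan dropdown.
for (let i = 0; i < 5; i++) tracker.onChange("signup", "email");
tracker.onChange("signup", "plan");
console.log(changes.length); // 2
```

In a browser, this might be wired to each tracked element’s `input` or `change` listener. Which event to listen to, and how form and field ids are derived, are left as assumptions.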

Input Rejection

Not all inputs have validation associated with them, but for those that do, it is helpful to track when validation rejects an entry. Repeated rejections can increase user frustration and indicate that the input requirements are unclear. Because most products handle input validation in a dedicated library or server-side endpoint, these events are best fired manually as part of that logic rather than collected from a general trigger. These events should also include the element and form identifiers. They can also include the rejection reason when a field has multiple validation rules.
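Rejection events are fired from the validation logic itself. One way to sketch this is a small validator that runs a list of rules for each field and emits an event naming the first rule that failed. The rules, field names, and reason codes below are hypothetical. A real product would call its own validation library or endpoint at this point. The event carries the rejection reason but not the rejected value, which keeps potentially sensitive text out of the tracking data.

```typescript
type RejectionEvent = { type: "input_rejected"; formId: string; fieldId: string; reason: string };

// A rule returns a rejection reason, or null when the value is accepted.
type Rule = (value: string) => string | null;

// Hypothetical rules and reason codes, standing in for a real validation library.
const rules: Record<string, Rule[]> = {
  email: [
    (v) => (v.trim() === "" ? "required" : null),
    (v) => (v.includes("@") ? null : "invalid_format"),
  ],
  zip: [(v) => (/^\d{5}$/.test(v) ? null : "invalid_format")],
};

// Runs a field's rules in order. On the first failure it fires a rejection event
// (with the reason, but not the rejected value) and returns false.
function validateField(
  formId: string,
  fieldId: string,
  value: string,
  track: (e: RejectionEvent) => void,
): boolean {
  for (const rule of rules[fieldId] ?? []) {
    const reason = rule(value);
    if (reason !== null) {
      track({ type: "input_rejected", formId, fieldId, reason });
      return false;
    }
  }
  return true;
}

const rejections: RejectionEvent[] = [];
const record = (e: RejectionEvent) => rejections.push(e);
validateField("signup", "email", "", record); // false, reason "required"
validateField("signup", "email", "jane.doe", record); // false, reason "invalid_format"
validateField("signup", "email", "jane@example.com", record); // true, no event
```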

Form Submission

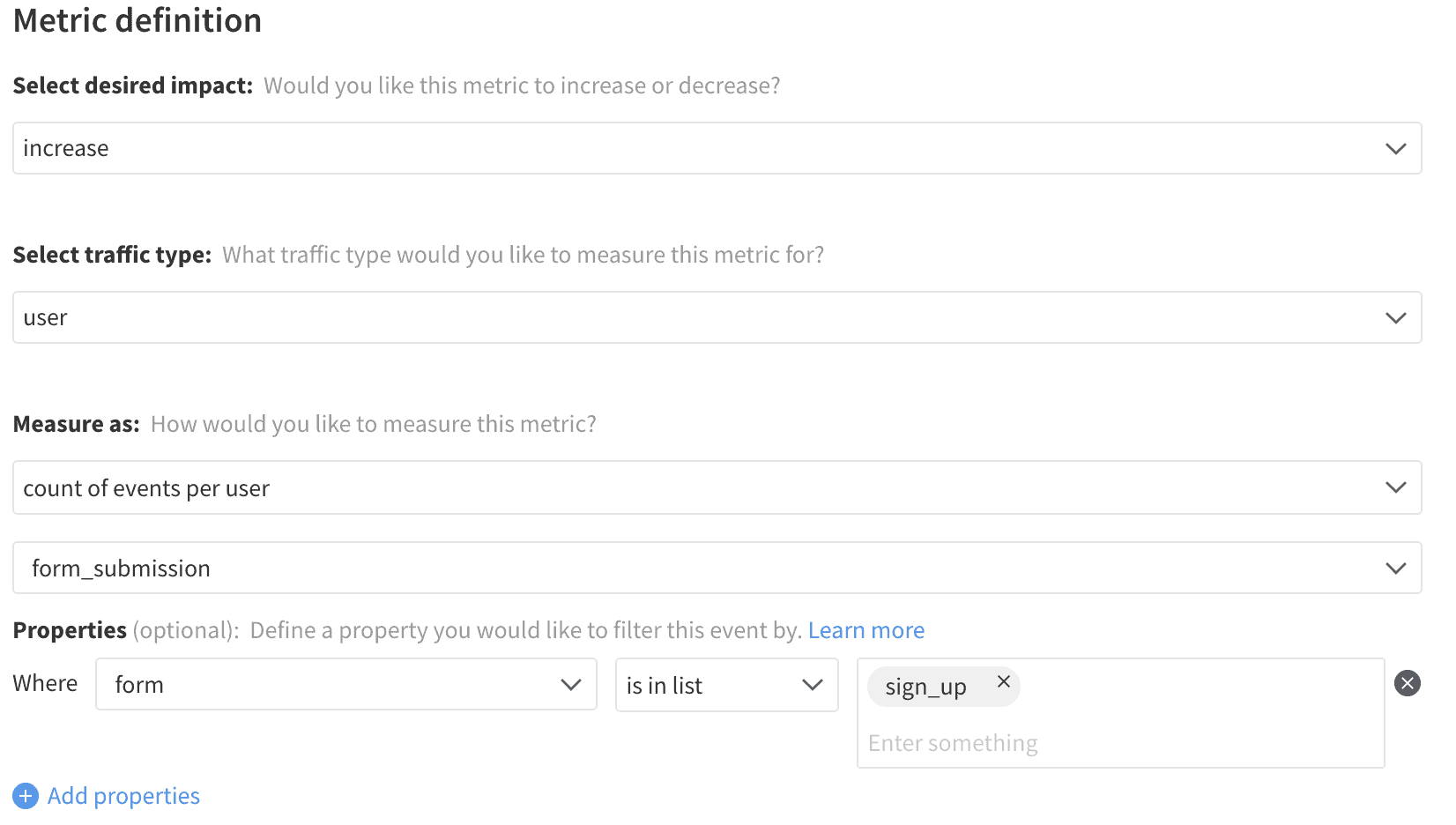

Whether through an HTML form submission, a button click, or the user simply no longer typing, there is almost always some trigger that uses the input to modify the application. This trigger can be captured to register the form’s completion, and the event can include the entered values of relevant form fields. As mentioned earlier, limit collected data to fields that serve a clear purpose and are not sensitive. For an exploration of this tracking data and its uses, refer to the section on satisfaction metrics.
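One way to limit collected data is an explicit per-form allowlist, so that new fields are excluded by default. The sketch below assumes hypothetical form ids, field names, and a `FormSubmitEvent` shape. Only allowlisted values are copied into the event.

```typescript
type FormSubmitEvent = { type: "form_submit"; formId: string; values: Record<string, string> };

// Per-form allowlist of fields whose values have a clear purpose and are not sensitive.
// Form ids and field names are hypothetical.
const CAPTURED_FIELDS: Record<string, string[]> = {
  "nps-survey": ["score", "reason"],
  signup: ["plan"],
};

// Builds the submission event, copying only allowlisted fields; everything else
// (emails, passwords, free text) is left out. Unknown forms send no values at all.
function buildSubmitEvent(formId: string, formValues: Record<string, string>): FormSubmitEvent {
  const values: Record<string, string> = {};
  for (const field of CAPTURED_FIELDS[formId] ?? []) {
    if (Object.prototype.hasOwnProperty.call(formValues, field)) {
      values[field] = formValues[field];
    }
  }
  return { type: "form_submit", formId, values };
}

const submitted = buildSubmitEvent("signup", {
  email: "jane@example.com",
  password: "hunter2",
  plan: "pro",
});
console.log(submitted.values); // { plan: 'pro' }
```

Because the allowlist is opt-in, a sensitive field added to the form later will not silently start flowing into the monitoring system.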

Metrics

Measuring input fields is similar to measuring other user interactions. When the fields are part of the product itself, it is helpful to track the input rate and the number of inputs users perform to show how engaged they are with those fields. When the fields are part of a larger form, that form can be thought of as a conversion funnel: users progress from initial exposure, to starting input, to final submission. Form abandonment is a useful metric to keep in mind, but it is hard to measure directly because it means proving a negative.
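For fields that are part of the product, input rate and input count can be computed from page view and input events. The sketch below uses one reasonable set of definitions, which are assumptions rather than standard formulas:

- Input rate is the share of users who viewed the page and changed at least one input.
- Input count is the average number of input events per user who interacted.

The `TrackedEvent` shape is hypothetical.

```typescript
// Hypothetical minimal analytics record.
type TrackedEvent = { userId: string; type: "page_view" | "input_change" };

// inputRate: users with at least one input / users who viewed the page.
// inputsPerUser: input events per user who interacted (only users who viewed the page count).
function inputMetrics(events: TrackedEvent[]): { inputRate: number; inputsPerUser: number } {
  const viewers = new Set<string>();
  const inputsByUser = new Map<string, number>();
  for (const e of events) {
    if (e.type === "page_view") {
      viewers.add(e.userId);
    } else {
      inputsByUser.set(e.userId, (inputsByUser.get(e.userId) ?? 0) + 1);
    }
  }
  let inputUsers = 0;
  let totalInputs = 0;
  inputsByUser.forEach((count, userId) => {
    if (viewers.has(userId)) {
      inputUsers += 1;
      totalInputs += count;
    }
  });
  return {
    inputRate: viewers.size === 0 ? 0 : inputUsers / viewers.size,
    inputsPerUser: inputUsers === 0 ? 0 : totalInputs / inputUsers,
  };
}

// Four viewers; "a" makes three inputs, "b" makes one, "c" and "d" make none.
const sample: TrackedEvent[] = [
  { userId: "a", type: "page_view" },
  { userId: "a", type: "input_change" },
  { userId: "a", type: "input_change" },
  { userId: "a", type: "input_change" },
  { userId: "b", type: "page_view" },
  { userId: "b", type: "input_change" },
  { userId: "c", type: "page_view" },
  { userId: "d", type: "page_view" },
];
console.log(inputMetrics(sample)); // { inputRate: 0.5, inputsPerUser: 2 }
```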

Tracking the submission rate as part of the funnel also captures abandonment as the metric’s complement (a submission rate of 60% implies an abandonment rate of 40%). Identifying what percentage of users reach each step, and how they flow from one step to the next, can help pinpoint the sources of drop-off. The fields users complete also show how far into the form a user got before dropping off, as some forms present a high burden to complete. The number of submissions measures engagement with forms that are part of regular application usage. It can also reveal cases where the application presents the same form to a user more often than it should (such as a customer survey).
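The funnel described above can be sketched as a single pass over exposure, input, and submission events for one form. The definitions are assumptions made for illustration:

- A user has started once any input changes, or once they submit.
- Submission rate is measured against users who started, and abandonment is its complement.
- Users who submit more than once are listed separately, so repeated presentations of the form can be spotted.

The `FunnelEvent` shape and the form and field names are hypothetical.

```typescript
// Hypothetical event shape; fieldId is only set on input_change events.
type FunnelEvent = {
  userId: string;
  formId: string;
  type: "form_exposure" | "input_change" | "form_submit";
  fieldId: string | null;
};

interface FunnelReport {
  exposed: number;
  started: number;
  submitted: number;
  startRate: number; // started / exposed
  submissionRate: number; // submitted / started
  abandonmentRate: number; // 1 - submissionRate
  avgFieldsBeforeDropOff: number; // distinct fields touched by users who started but never submitted
  repeatSubmitters: string[]; // users who submitted more than once
}

function formFunnel(events: FunnelEvent[], formId: string): FunnelReport {
  const exposed = new Set<string>();
  const fieldsByUser = new Map<string, Set<string>>();
  const submitsByUser = new Map<string, number>();

  for (const e of events) {
    if (e.formId !== formId) continue;
    if (e.type === "form_exposure") {
      exposed.add(e.userId);
    } else if (e.type === "input_change") {
      const fields = fieldsByUser.get(e.userId) ?? new Set<string>();
      if (e.fieldId !== null) fields.add(e.fieldId);
      fieldsByUser.set(e.userId, fields);
    } else {
      submitsByUser.set(e.userId, (submitsByUser.get(e.userId) ?? 0) + 1);
    }
  }

  // Walk only exposed users, so each funnel step is a subset of the previous one.
  let started = 0;
  let submitted = 0;
  let droppedFields = 0;
  const repeatSubmitters: string[] = [];
  exposed.forEach((userId) => {
    const fields = fieldsByUser.get(userId);
    const submits = submitsByUser.get(userId) ?? 0;
    if (fields === undefined && submits === 0) return; // exposed but never started
    started += 1;
    if (submits > 0) {
      submitted += 1;
      if (submits > 1) repeatSubmitters.push(userId);
    } else {
      droppedFields += fields === undefined ? 0 : fields.size;
    }
  });

  const droppedOff = started - submitted;
  const submissionRate = started === 0 ? 0 : submitted / started;
  return {
    exposed: exposed.size,
    started,
    submitted,
    startRate: exposed.size === 0 ? 0 : started / exposed.size,
    submissionRate,
    abandonmentRate: started === 0 ? 0 : 1 - submissionRate,
    avgFieldsBeforeDropOff: droppedOff === 0 ? 0 : droppedFields / droppedOff,
    repeatSubmitters,
  };
}

// Sample data: u1 and u2 submit (u2 twice), u3 and u5 drop off, u4 never starts.
const ev = (userId: string, type: FunnelEvent["type"], fieldId: string | null = null): FunnelEvent => ({
  userId,
  formId: "signup",
  type,
  fieldId,
});
const funnelEvents: FunnelEvent[] = [
  ev("u1", "form_exposure"), ev("u1", "input_change", "name"), ev("u1", "input_change", "email"), ev("u1", "form_submit"),
  ev("u2", "form_exposure"), ev("u2", "input_change", "name"), ev("u2", "form_submit"), ev("u2", "form_submit"),
  ev("u3", "form_exposure"), ev("u3", "input_change", "name"), ev("u3", "input_change", "email"),
  ev("u4", "form_exposure"),
  ev("u5", "form_exposure"), ev("u5", "input_change", "name"),
];
// startRate 0.8, submissionRate 0.5, abandonmentRate 0.5, avgFieldsBeforeDropOff 1.5, repeatSubmitters ["u2"]
console.log(formFunnel(funnelEvents, "signup"));
```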

Most inputs are designed to collect data for a particular product purpose, so the content of those fields is not necessarily meaningful for tracking. When collecting survey data or tracking specific feature usage, the selections can be passed with the form submission or save event. They can then be processed by counting occurrences of particular selections or by aggregating scores across users. Please refer to our section on satisfaction surveys for more information.
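Aggregating survey selections might look like the sketch below. It assumes a hypothetical submission payload with a 0 to 10 `score` and a single selected `reason`. It counts only each user’s latest submission, which assumes submissions arrive in chronological order. It then reports the mean score and how often each reason was chosen.

```typescript
// Hypothetical survey payload carried on the submission event.
type SurveySubmit = { userId: string; score: number; reason: string };

// Keeps each user's latest submission (assumes input is in chronological order),
// then returns the mean score and how many users chose each reason.
function summarizeSurvey(submissions: SurveySubmit[]): { meanScore: number; reasonCounts: Map<string, number> } {
  const latest = new Map<string, SurveySubmit>();
  for (const s of submissions) latest.set(s.userId, s); // later submissions overwrite earlier ones

  let total = 0;
  const reasonCounts = new Map<string, number>();
  latest.forEach((s) => {
    total += s.score;
    reasonCounts.set(s.reason, (reasonCounts.get(s.reason) ?? 0) + 1);
  });
  return { meanScore: latest.size === 0 ? 0 : total / latest.size, reasonCounts };
}

const summary = summarizeSurvey([
  { userId: "u1", score: 9, reason: "speed" },
  { userId: "u2", score: 6, reason: "price" },
  { userId: "u1", score: 10, reason: "speed" }, // replaces u1's earlier answer
  { userId: "u3", score: 8, reason: "speed" },
]);
console.log(summary.meanScore); // 8
console.log(summary.reasonCounts.get("speed")); // 2
```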

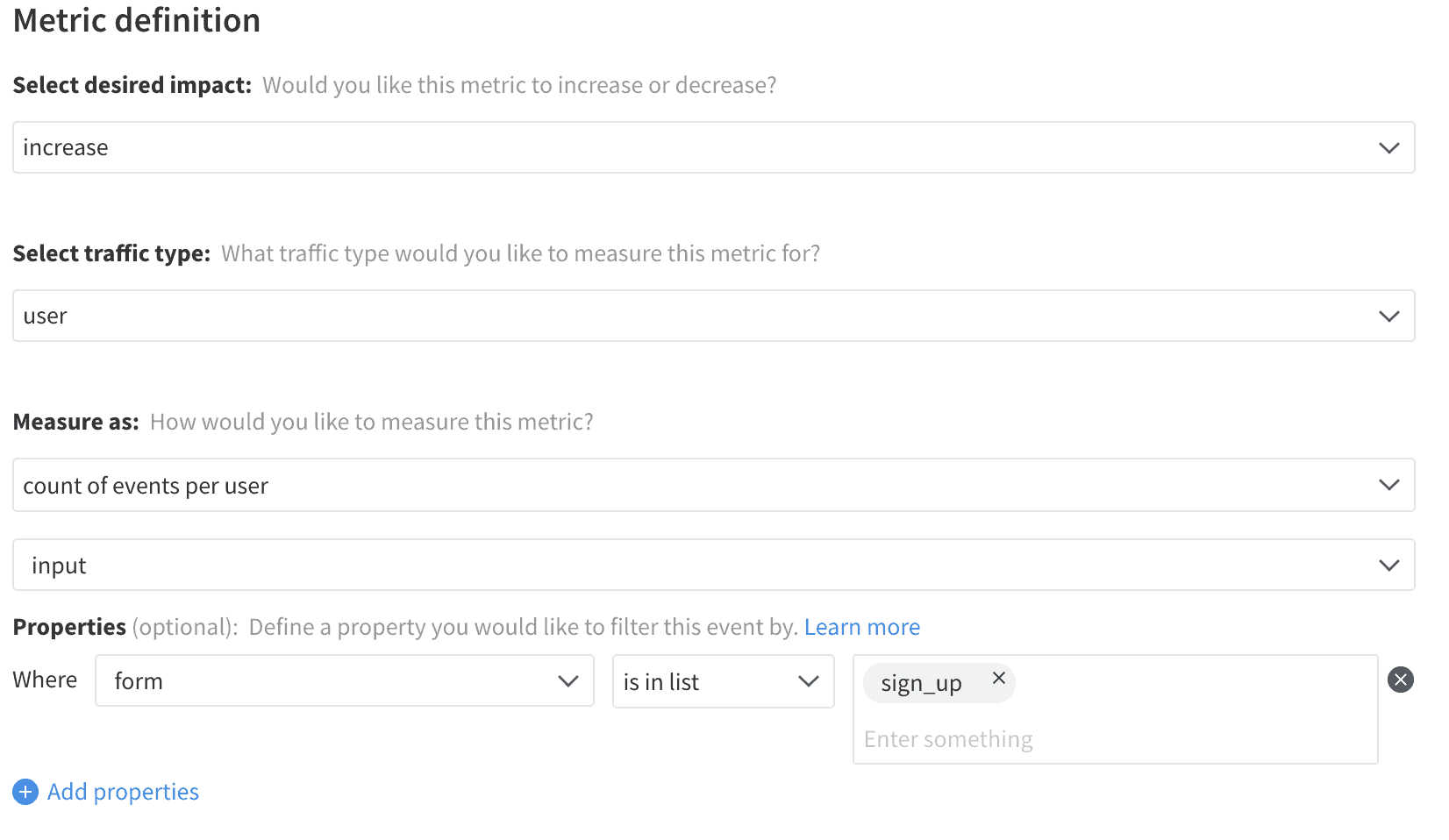

Input Rate

Input Count

Average Form Exposures

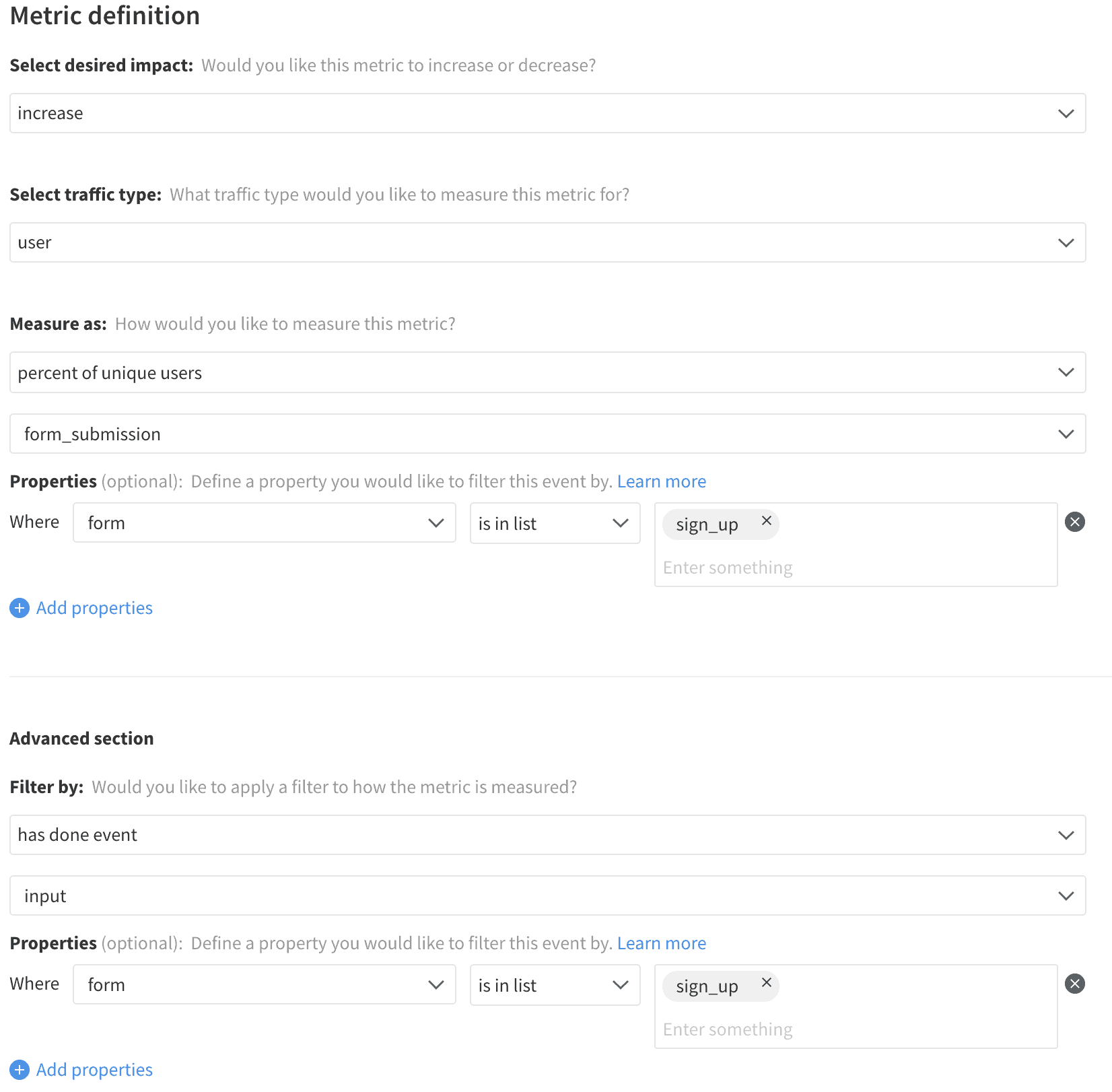

Form Start Rate

Fields Completed

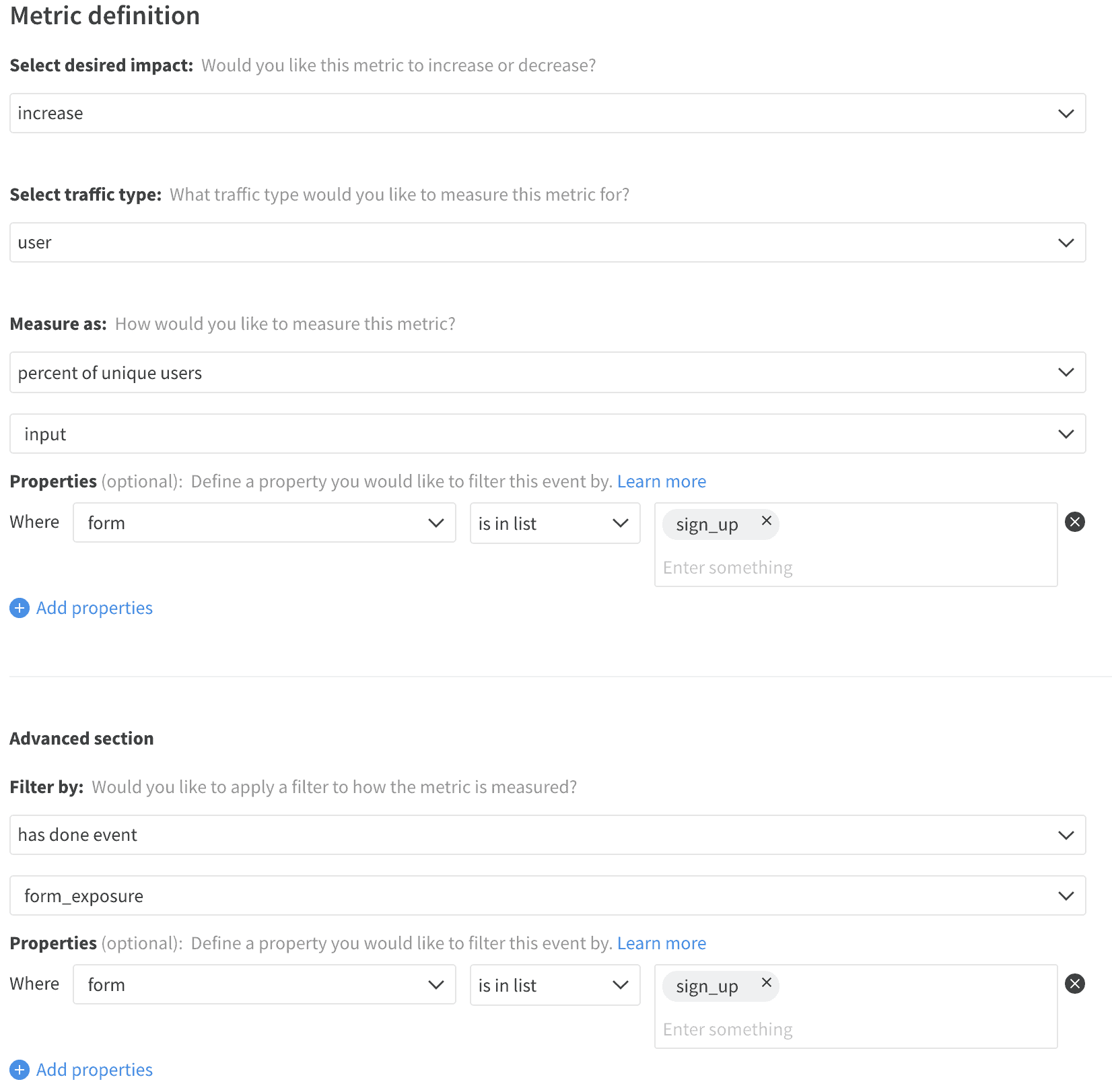

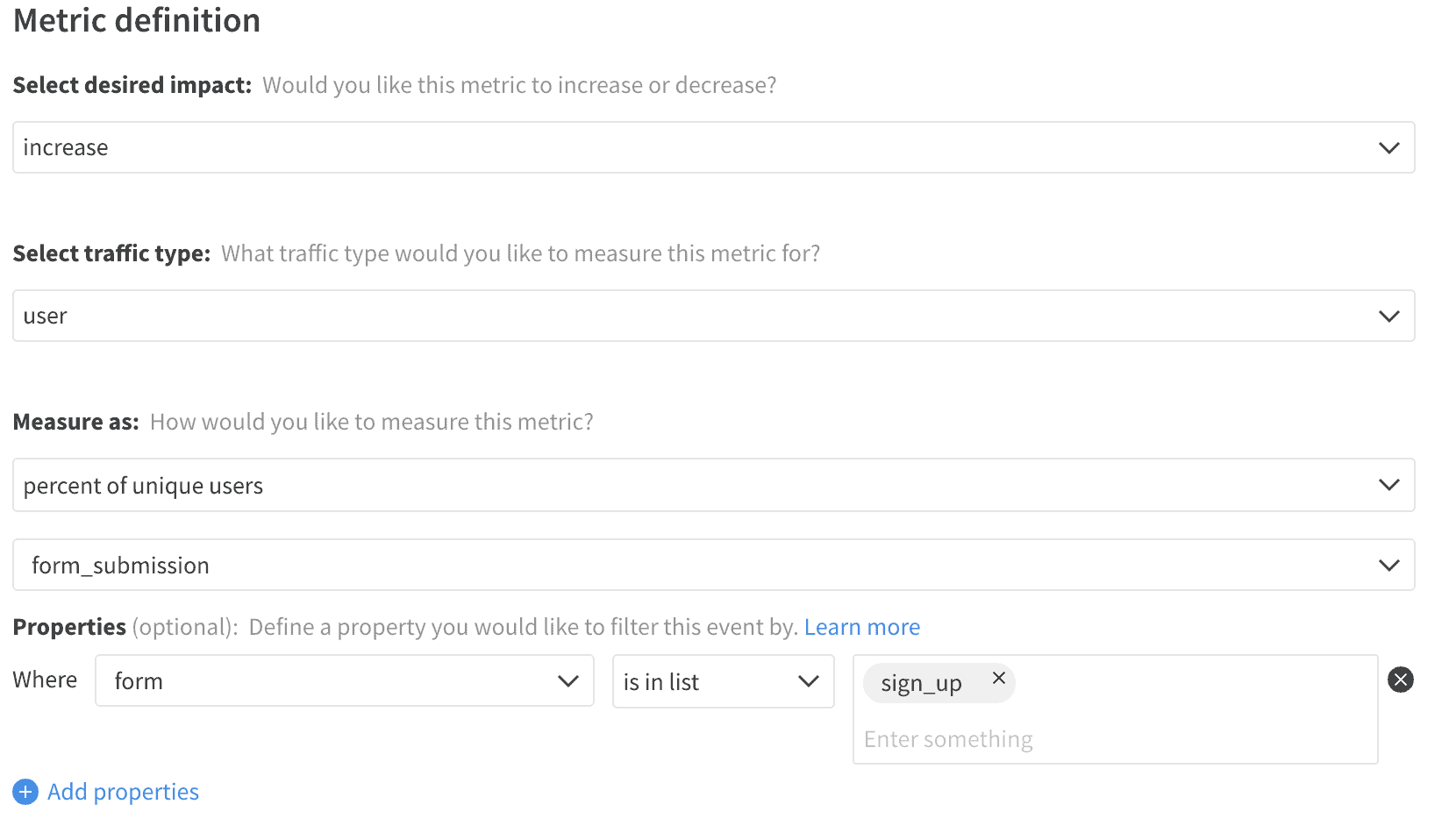

Submission Rate

Completion Rate

Submission Count