It has been a busy few weeks for us here at Split: we launched our new Feature Experimentation Platform in mid-November, and just last week we added our new Split Go SDK.

Right in the middle was AWS re:Invent, November 27-30. The Split booth at re:Invent attracted a ton of traffic, and we gave away about 1,000 t-shirts! As our t-shirts say, our message at the event was simple but powerful: “Test Everything. Results Matter.”

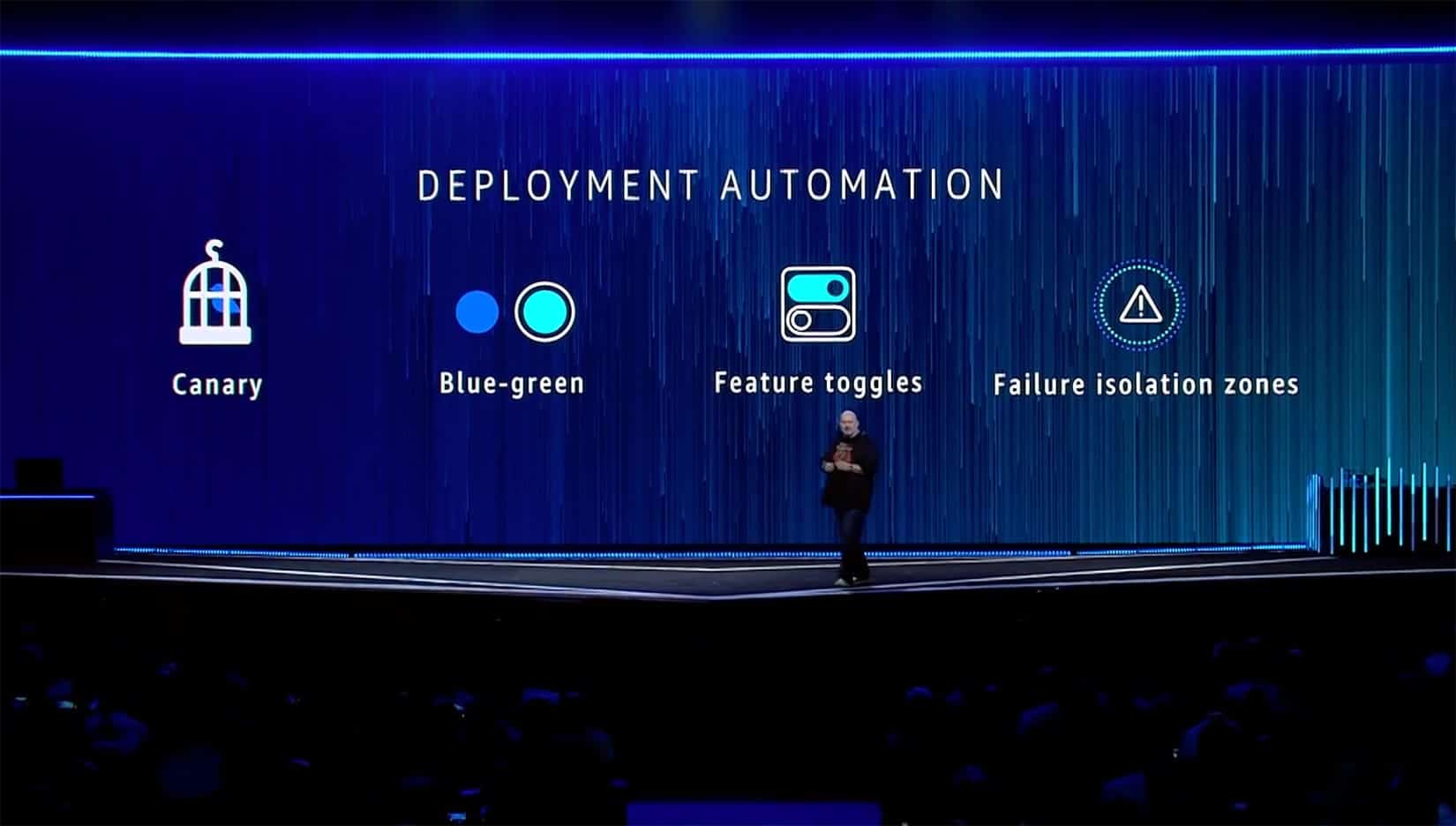

But before diving into our own message at re:Invent, it was exciting for us to see Amazon.com CTO Werner Vogels talking about our foundational technology. Split, at its core, uses a technology called “feature flags” or “feature toggles” to control the visibility of features to users. Werner brought up feature toggles as one of the best practices for automating deployments.

As Werner explains, “Feature toggles is the mechanism where you deploy the new code or the new system, but you don’t enable the new code. And you slowly start toggling on features one by one to see how they impact your customers.”
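To make the idea concrete, here is a minimal sketch of a feature toggle in Python. It is only an illustration of the pattern Werner describes, not Split's SDK: the `FLAGS` dictionary, the `is_enabled` helper, and the `new_checkout` flag name are all hypothetical.

```python
# Hypothetical in-memory flag store for illustration only; a real system
# would fetch flag state from a flag service rather than a module-level dict.
FLAGS = {"new_checkout": False}


def is_enabled(flag_name: str) -> bool:
    """Return the flag's state; unknown flags default to off."""
    return FLAGS.get(flag_name, False)


def checkout() -> str:
    # The new code path is deployed but stays dark until the flag is turned on.
    if is_enabled("new_checkout"):
        return "new checkout flow"
    return "legacy checkout flow"
```

Flipping `FLAGS["new_checkout"]` to `True` switches `checkout()` to the new path without another deployment, which is the separation of deploy and release that the quote describes.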

From Feature Flags to Feature Experimentation

Right after Werner talked about automation and resilience, he introduced Nora Jones, co-author of the book “Chaos Engineering” and Senior Software Engineer at Netflix, to the stage. Nora spoke about the famed processes that Netflix has in place to deliberately introduce failures into their systems to test resilience and failover.

Chaos testing for infrastructure resilience has become commonplace among leading online services and cloud applications. Automated code testing as part of deployment is also commonplace. You might think that between testing infrastructure and testing code you have all your bases covered. However, there is a major missing link in this picture. What about customer experience? What about the impact of a code release on the business? Your code might deploy fine, but if the intended feature misses the mark or causes a poor customer experience, isn’t that just as much of a failure?

If you connect the dots between feature flags and a culture of testing and experimenting on everything, the net result is continuous feature experimentation. As we announced a few weeks ago, the Split Feature Experimentation Platform is bringing together these two worlds.

Bringing our Message to AWS re:Invent

We demoed our new feature experimentation platform at the event, and the response was overwhelming. Engineering leaders saw the value of controlling the blast radius of a new feature by exposing it only to beta customers, or by doing a percentage-based roll-out to users that match specific attributes. With such a small exposure to the user base, the impact would not be easily measurable in APM tools. However, Split ingests all the event data you care about, letting you see the impact on key metrics like page load times for just the precise population that was exposed to a new feature.
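As a rough sketch of how a percentage-based roll-out to users with matching attributes can work, the Python below hashes each user into a stable bucket and then averages a metric over only the exposed users. This is a generic illustration and makes no claim about how Split implements targeting: the function names, the `load_ms` event field, and the hashing scheme are all assumptions.

```python
import hashlib


def bucket(user_id: str, flag_name: str) -> int:
    """Map a user to a stable bucket in [0, 100) for a given flag."""
    digest = hashlib.sha256(f"{flag_name}:{user_id}".encode("utf-8")).hexdigest()
    return int(digest, 16) % 100


def is_exposed(user_id, attributes, flag_name, required, percentage):
    """Expose the feature only to matching users whose bucket is under the percentage."""
    matches = all(attributes.get(key) == value for key, value in required.items())
    return matches and bucket(user_id, flag_name) < percentage


def mean_load_time(events, exposed_ids):
    """Average the hypothetical `load_ms` field over events from exposed users only."""
    times = [e["load_ms"] for e in events if e["user_id"] in exposed_ids]
    return sum(times) / len(times) if times else None
```

Because the bucket is derived from a hash rather than a random draw, the same user sees the same treatment on every request, and raising the percentage only adds users to the exposed group instead of reshuffling it.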

No need to configure experiments across 2-3 different tools anymore. Split shows you all the metrics you care about in one place, automatically configured for an experiment.

At the same time, product leaders saw the value in seeing the impact of a new feature on things like product usage metrics, or even helpdesk or support tickets. This gives a product manager a complete view of the impact of a new feature, on both the direct metrics the feature was intended to move and the broader metrics that matter to the organization as a whole.

Continuing the Conversation

We told a compelling story at re:Invent. Whether you stopped by our booth or not, we’re excited to keep the conversation going. Follow us on LinkedIn or Twitter to continue to get updates on what’s new in the platform.

If you didn’t catch it live, here’s a quick video of the demo.
